Det. Eng. Weekly #126 - live laugh logs

every SOC should have this over their kitchen tables

Welcome to Issue #126 of Detection Engineering Weekly!

📣📰🌐 Now accepting sponsors!

The newsletter is growing, and I’m excited to announce that I am accepting sponsors to place ads in issues of Detection Engineering Weekly.

You’ll notice some Issues containing a sponsored ad section at the top of the newsletter. Each Weekly is always free, so nothing changes there.

I have some constraints on who I work with, so if you are interested in collaborating with me, please send me a message via email by clicking the button below.

💎 Detection Engineering Gem 💎

The Tyranny of False Positives by Michael Taggart

I’ve battled false positive alerts my whole career. The visceral allergic reaction they cause in analysts, detection teams and customers can make you feel like the worst person in the world. It’s like taking a production database down (which I’ve also done), but instead of booting it back up, you are reminded that your customer will never get that time back, so it feels way more existential.

Detection efficacy, which I discussed extensively in Field Manual #3, is my attempt to describe the usefulness of an alert based on its accuracy, triage capacity, and risk tolerance. But, after reading Taggart’s blog on efficacy, I think I’m a believer in his proposed Admiralty-code-like approach to efficacy. And, Taggart is talking about game theory, so I guess I owe him a beer if we ever meet at a conference.

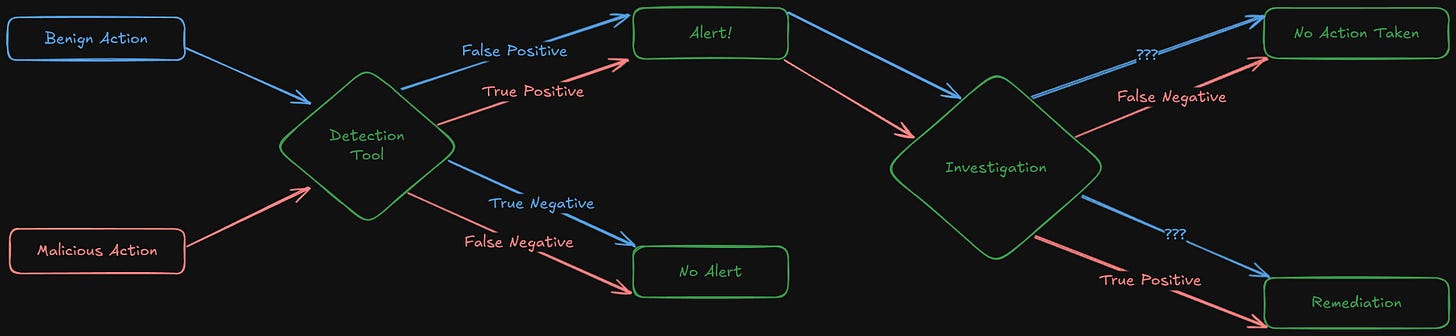

What you see above is Taggart’s approach to the usefulness or efficacy problem in Detection Engineering. It’s something of an homage to one-shot games, with the idea that you perform one action-to-investigation traversal per alert. However, note that the outcomes aren’t TP/FP/TN/FN labels; instead, they are actions taken after an investigation. This framing makes it much easier for detection teams and leadership to understand the value of alerts, rather than relying on ratios and MITRE coverage.

At the end of the post, Taggart proposes an Admiralty code-like labeling system with a sprinkle of CVSS to help capture these path traversals. It’s interesting in the sense that it fills a measurement gap in a much higher dimensionality than TP/FP. Still, it can definitely be harder to parse for the normies outside of detection circles. Even so, taking this labeling system and encoding it into higher-level metrics can likely provide detection and incident teams with trends on their alerting strategies.

🔬 State of the Art

Architecting Your Detection Strategy for Speed and Context by Jack Naglieri

The most significant jump in understanding I have found with detection engineers is when they start differentiating alerting strategies based on the behaviors they aim to catch. For example, conceptually understanding that a connection to a known malicious domain should result in an immediate alert and a subsequent blocking action happens quickly in an operational environment. But, what if the domain is not marked as malicious, yet the traffic looks suspicious? What does suspicious actually mean in this context?

Jack’s blog explores these concepts in detail. Detection strategies emerge when the behavioral or contextual information becomes a critical decision point for finding threat actor activity. Atomic detections, according to Jack, are akin to the idea of the malicious domain. Still, other behaviors, such as malicious cronjobs, cURLing an unknown binary on a user session, or adding malicious email forwarding rules, all make sense to alert in isolation.

This gets complicated with more sophisticated behaviors, or with malicious behavior that looks benign. Risk-based alerting strategies can help with this, but adding contextual information (who is this person? Should they be using the command prompt while being in Finance?) and historical information (does this engineer usually log in to all production databases off hours?) creates a more robust approach to threat detection.
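To make the contextual and historical idea concrete, here is a minimal sketch of risk-based alerting in Python. It is not taken from Jack's blog: the `Signal` and `UserContext` schemas, the signal names, the risk weights and the threshold are all hypothetical values I picked for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    """One low-confidence observation tied to a user (hypothetical schema)."""
    user: str
    name: str
    base_risk: int


@dataclass
class UserContext:
    """Contextual and historical facts about a user (hypothetical schema)."""
    department: str
    usual_signals: set = field(default_factory=set)


def score_signal(signal: Signal, ctx: UserContext) -> int:
    """Adjust a signal's base risk using context and history."""
    risk = signal.base_risk
    # Contextual: a command prompt in Finance is more unusual than in Engineering.
    if signal.name == "command_prompt" and ctx.department == "Finance":
        risk += 30
    # Historical: behavior this user shows routinely is discounted by half.
    if signal.name in ctx.usual_signals:
        risk //= 2
    return risk


def should_alert(signals, contexts, threshold=60):
    """Return users whose accumulated risk meets or exceeds the threshold."""
    totals = {}
    for s in signals:
        totals[s.user] = totals.get(s.user, 0) + score_signal(s, contexts[s.user])
    return {user: total for user, total in totals.items() if total >= threshold}


signals = [
    Signal("alice", "command_prompt", 40),
    Signal("alice", "offhours_db_login", 30),
    Signal("bob", "offhours_db_login", 30),
]
contexts = {
    "alice": UserContext(department="Finance"),
    "bob": UserContext(department="Engineering", usual_signals={"offhours_db_login"}),
}
alerts = should_alert(signals, contexts)
```

In this toy data, the same off-hours database login counts for less on the engineer who does it routinely, while the Finance user's command prompt gets bumped up. No single signal alerts on its own; only the accumulated score does.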

Jack goes into deep detail about these strategies and provides numerous excellent examples, both in scenarios and in rules, for others to follow. At the end of the blog, he makes some excellent points about how LLM and agentic approaches to investigation alleviate this problem even more, and he tops it off with an example playbook for readers to take home and try in their own environments.

What Framing Security Alerts as a Binary True or False Positive is Costing You by David Burkett

This False Positive blog by Burkett offers an excellent perspective that should be combined with the reading from Taggart. I like how he frames false positives from a multi-environment point of view, rather than focusing on a single organization. The example he uses revolves around an MSSP that deploys a rule to monitor remote monitoring and management (RMM) tools:

Customer A uses RMM Tool AnyDesk for normal IT operations.

Customer B uses RMM Tool ConnectWise for normal IT operations.

Your rule triggers whenever AnyDesk is detected.

For Customer A, the alert is technically correct, your detection fired on exactly what it was built to detect. Under SDT, that’s a true positive. But operationally, it has no security impact because it’s part of daily business activity.

For Customer B, the same detection could be suspicious or outright malicious. Same rule. Same accuracy. Very different impact.

This scenario demonstrates a key concept he explains in the next section: intent. If a rule is accurately catching AnyDesk but Customer A uses AnyDesk, then the intent was captured and isn’t relevant for Customer A. If the intent of the rule is to catch malicious use of RMM tools, then Customer B would benefit from this rule because they use ConnectWise, not AnyDesk. Intent, according to Burkett, captures the full lifecycle of an alert from generation to investigation. This inclusive approach helps teams tune rules to their environmental context rather than the technicalities of catching behavior.
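The MSSP scenario above can be sketched in a few lines of Python. This is my own illustration, not code from Burkett's post: the `APPROVED_RMM` inventory, the customer keys and the `triage_rmm_alert` helper are hypothetical names.

```python
# Hypothetical per-customer inventory of RMM tools approved for IT operations.
APPROVED_RMM = {
    "customer_a": {"AnyDesk"},
    "customer_b": {"ConnectWise"},
}


def triage_rmm_alert(customer: str, tool: str) -> str:
    """Classify an RMM detection by its impact in the customer's environment.

    The rule itself is equally accurate for every customer; only the
    environmental context changes what the alert means.
    """
    approved = APPROVED_RMM.get(customer, set())
    if tool in approved:
        # The detection fired correctly, but this is daily business activity.
        return "expected"
    # An RMM tool the customer does not use could be suspicious or malicious.
    return "investigate"


results = {c: triage_rmm_alert(c, "AnyDesk") for c in APPROVED_RMM}
```

Same rule, same accuracy, and the only thing that differs between the two customers is the lookup against what their environment treats as normal.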

The Fragile Balance: Assumptions, Tuning, and Telemetry Limits In Detection Engineering by Nasreddine Bencherchali

Detection writing is a practice of informed assumptions. I love this framing by Nasreddine, because it captures the detection engineering condition that lots of us live with every day. In this blog, tuning is front-and-center as his approach to solving false positives. But tuning is more than just adding suppression lists or changing a field in a query. For example, typical behavior of a user or an environment can influence how you tune the rule. Another example is the lack of telemetry: do you have all the necessary context before you can make an alerting decision? He lists several fields from Sysmon Event ID 11, but those fields may not give a detection engineer enough information to make an informed decision.

He ends the blog with my favorite section, “Choosing the Right Fix.” Basically, it boils down to the capacity of the security team and the detection engineer. Was the assumption on the behavior too uninformed or not tailored to the environment? Is the risk tolerance for this specific event so low that it needs to be aggressively filtered? This is a fantastic tactical look at false positives because it helps give us a methodology behind the problem.

Using Auth0 Event Logs for Proactive Threat Detection by Maria Vasilevskaya

The Auth0 team at Okta just launched a new detection rule set to help protect Auth0 customers. I love the framing of their release because it focuses on several personas who could use these detections. I’ll link the full repository below, but if you take a look at the rule Refresh Token Reuse Detection, the team put in excellent metadata. The explanation, comments and prevention fields have plenty of commentary for folks to internalize these detections and understand the intent behind each one.

Detecting ClickFixing with detections.ai — Community Sourced Detections by mikecybersec

It’s cool to see how community-sourced detections are coming together on a platform other than GitHub! In this post, mikecybersec explores how the community website, detections.ai, can be leveraged when reading a threat intelligence report on ClickFix. Detection ideation and research is one of the most fun parts of detection engineering, and in this specific blog, mikecybersec references MSTIC’s latest ClickFix blog as their source of inspiration for finding rules. They search through the community portal for ClickFix, pick out some candidate rules, add their own tuning and begin testing.

☣️ Threat Landscape

iOS 18.6.1 0-click RCE POC by b1n4r1b01

The big vulnerability research news this past week is Apple’s out-of-band security patch for the CVE-2025-43300 0-click RCE vulnerability. An attacker can build a specially crafted picture leveraging Apple’s image decompression library and achieve remote code execution on a victim device. It was hard finding technical details on this one, but several news articles linked to b1n4r1b01’s implementation. I couldn’t verify the PoC.

Falcon Platform Prevents COOKIE SPIDER’s SHAMOS Delivery on macOS by Maddie Stewart, Suweera De Souza, Ash Leslie, and Doug Brown

Who would win in a fight, a trillion-dollar company named after a fruit, or one piece of malware leveraging a password prompt to bypass Gatekeeper? Researchers at CrowdStrike uncovered an extensive ClickFix campaign targeting macOS victims to deploy a version of AMOS Stealer. The campaign leverages a combination of malvertising and social engineering techniques to get victims to run a malicious bash command, which subsequently installs the malware. This leads to infections that steal secrets and provide initial access, similar to other infostealer families on Windows.

ghrc.io Appears to be Malicious by Brandon Mitchell

I am both impressed and terrified by how creative attacks emerge from the software supply chain ecosystem. This ecosystem accelerates to keep up with the demand of developers shipping software, and with that acceleration, leaves open all kinds of interesting attack paths inside a network. Mitchell found an attack path leveraging an old school attack that appears to still work to this day: typosquatting. This typosquat domain abuses a keyboard slip that can result in a compromise via a malicious container from GitHub’s container registry.
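A keyboard slip like ghrc.io for ghcr.io is a single adjacent-character swap, which makes it easy to check for. Below is a minimal Python sketch, not from Mitchell's post, that flags image references whose registry host is one edit or transposition away from a registry you trust. The `KNOWN_REGISTRIES` allowlist and the function names are my own assumptions, and the host parsing is a simplification of Docker's convention that the first path component is a registry only if it contains a dot or colon or is `localhost`.

```python
# Hypothetical allowlist of registries you intend to pull from.
KNOWN_REGISTRIES = {"ghcr.io", "docker.io", "quay.io", "gcr.io"}


def osa_distance(a: str, b: str) -> int:
    """Optimal string alignment distance: insertions, deletions,
    substitutions, and swaps of two adjacent characters each cost 1."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i
    for j in range(cols):
        d[0][j] = j
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution or match
            )
            # Adjacent transposition, e.g. "rc" typed instead of "cr".
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


def registry_host(image_ref: str) -> str:
    """Extract the registry host, defaulting to Docker Hub when none is given."""
    first, sep, _ = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def looks_typosquatted(image_ref: str, max_distance: int = 1):
    """Return the trusted registry this host resembles, or None."""
    host = registry_host(image_ref)
    if host in KNOWN_REGISTRIES:
        return None
    for known in sorted(KNOWN_REGISTRIES):
        if osa_distance(host, known) <= max_distance:
            return known
    return None
```

Running something like this over Dockerfiles, Kubernetes manifests, or CI configs is a cheap lint. A distance threshold of 1 keeps noise down, but it will miss squats that differ by more than one slip.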

How Spur Uncovered a Chinese Proxy and VPN Service Used in an APT Campaign by Spur Engineering

I linked a story two issues ago about a leak of DPRK operations from a security researcher. The Spur team took that leak and combed through it for Kimsuky infrastructure to see if they could identify and fingerprint any type of proxying or VPN service leveraged in their campaigns. This is a great example of a threat intelligence pivoting blog that starts with a single IOC and uncovers a large swath of infrastructure used to carry out attacks.

Agentic Browser Security: Indirect Prompt Injection in Perplexity Comet by Artem Chaikin and Shivan Kaul Sahib

Vulnerability writeups against agentic products remind me a lot of the early days of security, when buffer overflows were the norm. These are now taught in “basic” security courses, and they’re almost laughed at because, with so many protections in place, not taking untrusted input into a privileged context seems like a given. This same class of vulnerabilities from two decades ago is now rearing its ugly head in the implementation of LLMs in everyday tools.

Brave researchers found this exact problem in Perplexity’s Comet browser. They managed to achieve prompt injection of Comet’s LLM handler, where a specially crafted webpage (read: just a freakin’ prompt) can influence how Comet behaves. Their PoC involves doing some scary things with a personal email address and Comet’s login page.

🔗 Open Source

GitHub enumeration and investigation tool built by my colleague Christophe Tafani-Dereeper. You provide a GitHub username, and it’ll use several OSINT heuristics within the repository and GitHub as a whole to uncover intelligence around the account.

This is a cool interview take home exercise from the Adobe security team. I’ve gone back and forth on take home exercises, because they can sometimes be used to do free work for the company, but this one looks like an interesting and timeboxed challenge.

Detection rules from the Auth0 announcement above. It has excellent documentation, both in the rules and in the README on the main page. You can run Sigma over all of these rules to convert them to several formats.

Yet another open source post-exploitation toolset. Of course, it says for educational use only, though you can never trust the bad guys using open source tools just to learn.