DEW #151 - The Security Cognitive Rust Belt, Music Streaming Fraud & the Axios Incident Post-Mortem

And the Sabres make the playoffs :3

Welcome to Issue #151 of Detection Engineering Weekly!

✍️ Musings from the life of Zack:

I tried to visit my hometown over the weekend, but my flight was canceled before I could leave, so I took my first solo road trip in years. Maybe it’s an American culture thing, but I didn’t mind the 6.5-hour drive: lots of music, podcasts, and time to sit with my thoughts.

It’s always strange going back to your hometown and seeing how much has or hasn’t changed. For example, it’s almost mid-April, and I drove into snow :(. But pizza & chicken wings are so much better in NY than in New England, so I hope that never changes.

I’ve been reading about Daniel Miessler’s PAI project, and I’m quite impressed with the idea of using AI for Personal Augmentation. Rather than juggling several Claude Code sessions or optimizing integrations with Gmail or Calendar, you can use it almost like an extension of yourself. It learns your motivations, wishes, and tool-stack preferences, and even tries to configure its personality so you enjoy working with it. This is definitely my project for the next several weeks.

Sponsor: Nebulock

Automate the Tedious Parts of Your Hunting Workflow

The hardest part of threat hunting isn’t running queries. It’s knowing what to look for, why it matters, and whether your environment is exposed.

Distilling reports, mapping TTPs, and translating into behavioral indicators is where time disappears. Vespyr, Nebulock’s autonomous hunting agent, handles the reasoning layer. Findings are tied to your stack, your data, and your exposure profile, so every result is relevant to your environment and ready for the judgment calls only you can make.

💎 Detection Engineering Gem 💎

The Implementation Blind Spot | Why Organizations Are Confusing Temporary Friction with Permanent Safety by Chris St. Myers

This is an excellent commentary on the risks in the adoption curve of AI and agents in security. It’s easy to get overwhelmed by the marketing noise and the fear, uncertainty, and doubt about AI in security. On the one hand, we hear about so many companies adopting AI to increase productivity and sell products and, more often than not, citing it to justify layoffs. On the other hand, AI doomers claim that this technology will ruin our careers by automating us away. Like most things in life, the answer is probably somewhere in the middle, but we need to make sure we understand the risks.

We are all fortunate to be standing on the shoulders of giants. We know what a good security product, alert, or workflow looks and feels like. AI is still too new for us to have forgotten how much practice it took to learn our craft with deterministic tools like Wireshark, the command line, and SIEMs. St. Myers warns, though, that we are at risk of forgetting. He contrasts this with the mass adoption of technologies like the cloud, where security practitioners (and technologists in general) kept their analytical capabilities because it was a deterministic shift in architecture. We still needed to understand and synthesize information to automate tasks.

We are not just changing the pipes; we are changing who (or what) processes the data.

But AI is non-deterministic, and that’s by design. The ‘who’ in the quote here is important. St. Myers calls this risk the “cognitive rust belt”. We aren’t farming out architecture, building, or repetitive tasks to AI; we are farming out analytical capabilities. It’s a gradual hollowing-out of those capabilities, as if we were all handed a junior analyst to synthesize data for us and all we ever read were the responses to our prompts.

Here’s how it relates to detection and response:

We’re building increasingly complex detection technology, but we risk losing our understanding of why those detections matter and how to investigate when they fail.

With AI-generated triage, are we slowly removing the “approved by an analyst” workflow? In which parts of D&R will we cede agency to AI?

If we solve SOC analyst burnout with AI, which is great, what do we lose in the process? How else will analysts learn the field if they don’t sit down and work through alerts?

They have been living inside summaries, not raw telemetry.

These paradoxes show up in detection engineering, but honestly, they apply anywhere we’re trying to replace or accelerate human analysis with AI. We have to find ways to train and retain expertise in an analytically rigorous profession. The prompts will be tuned and perfected, direct feedback on results will become more opaque, and we run the risk of no longer understanding how things work under the hood. Once we enter the rust belt, it’ll be hard to trust LLM output when we can no longer trust that we have the expertise to judge it.

🔬 State of the Art

I fell in love with Darknet Diaries years ago, probably starting with the Carbanak episode. It’s cool to hear the mix of pure cybersecurity, professional stories, and security-adjacent stories through Jack’s storytelling. In this episode, Jack interviews the CEO of BeatDapp, who started out as a fraudster in the BlackHat/GrayHat SEO realm. The company began as a marketing firm but is now a fraud-prevention platform for the music industry. There are SO many parallels to security.

Fraud’s impact is directly measurable (loss prevention), and bad actors are extremely persistent in finding new ways to commit fraud.

Many fraud techniques rely on security compromises, ranging from taking over individual accounts to compromising streaming services to skim money from payouts.

Detection rules range from basic heuristics to machine learning, and clustering activity is a huge part of finding fraud.
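To make the clustering bullet concrete, here is a minimal sketch, assuming a mapping of hypothetical account IDs to the set of track IDs each one streamed. Accounts with near-identical listening sets (Jaccard similarity at or above a threshold) are unioned into clusters, and large clusters get flagged for review. The threshold and minimum cluster size are illustrative assumptions, not values from the episode.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two track sets: |A ∩ B| / |A ∪ B|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_accounts(
    plays: dict[str, set[str]], threshold: float = 0.8, min_size: int = 3
) -> list[set[str]]:
    """Group accounts whose listening sets overlap heavily (union-find)."""
    parent = {acct: acct for acct in plays}

    def find(x: str) -> str:
        # Walk to the root, compressing the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # O(n^2) pairwise comparison: fine for a sketch, not for production volumes.
    for a, b in combinations(plays, 2):
        if jaccard(plays[a], plays[b]) >= threshold:
            parent[find(a)] = find(b)

    groups: dict[str, set[str]] = {}
    for acct in plays:
        groups.setdefault(find(acct), set()).add(acct)
    # Only clusters of at least min_size accounts are worth an analyst's time.
    return [g for g in groups.values() if len(g) >= min_size]


plays = {
    "acct-1": {"t1", "t2", "t3"},
    "acct-2": {"t1", "t2", "t3"},
    "acct-3": {"t1", "t2", "t3", "t4"},
    "acct-4": {"t9", "t10"},
}
print(cluster_accounts(plays, threshold=0.7))
```

With a threshold of 0.7, the first three accounts collapse into one suspicious cluster while acct-4 stays on its own; real fraud teams would cluster on many more features (devices, IPs, timing) than track sets alone.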

I also learned a few things about streaming platforms’ business model from this episode. Advertisers pay apps like Spotify or Apple Music for ads, and the money goes into a single pool each month. The streaming services then total the listen counts across all artists and compute each artist’s share of that total, like slices of a pizza (percentages). Finally, they carve out a portion of the ad revenue to pay artists and divvy up the payments according to those percentages.

So, if you compromise an artist or the streaming services, and you can take money off the top of those payouts, you can make a lot of money.
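The pro-rata model described above fits in a few lines of arithmetic. This is a minimal sketch, assuming a hypothetical artist_cut parameter for the portion of revenue set aside for artists; real platforms’ rates and payout rules are more complex and aren’t covered in the episode summary here.

```python
from decimal import Decimal, ROUND_DOWN


def pro_rata_payouts(
    streams_by_artist: dict[str, int], ad_revenue: Decimal, artist_cut: Decimal
) -> dict[str, Decimal]:
    """Split the artist pool by each artist's share of total streams."""
    pool = ad_revenue * artist_cut  # portion of revenue carved out for artists
    total = sum(streams_by_artist.values())
    if total == 0:
        return {artist: Decimal("0.00") for artist in streams_by_artist}
    payouts = {}
    for artist, streams in streams_by_artist.items():
        share = Decimal(streams) / Decimal(total)  # this artist's "pizza slice"
        # Round down to cents so payouts never exceed the pool.
        payouts[artist] = (pool * share).quantize(Decimal("0.01"), rounding=ROUND_DOWN)
    return payouts


honest = {"A": 600, "B": 300, "C": 100}
print(pro_rata_payouts(honest, Decimal("2000"), Decimal("0.5")))
```

Because everyone shares one pool, any stream added anywhere dilutes everyone else. If artist C’s count goes from 100 to 1,100 (say, 1,000 fake streams), the same 1,000.00 pool splits 300.00 / 150.00 / 550.00 instead of 600.00 / 300.00 / 100.00.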

Fascinating stuff!

A Detection Researcher Mindset by Scott Plastine

It always fascinates me to find posts like this one by Plastine that outline the author’s mental model for research and detection ideation. Detection ideation typically begins with a news story or a research blog post that (hopefully) contains enough technical detail to start the process. From there, you deconstruct this information into components: capabilities, environmental context, existing coverage, and feasibility. This is easier said than done, so Plastine breaks it into seven steps; funnily enough, the last step is writing the detection.

Plastine starts by understanding the technique and what normal behavior looks like in the context of the attack. A lot of people jump straight into writing rules without investigating whether the technique is even relevant to their environment. If it is relevant and you understand the attack, you then need to check whether you have the telemetry your rules need to fire.
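The telemetry check is essentially a set difference between the fields a rule needs and the fields your pipeline actually collects. A minimal sketch, assuming hypothetical log source names and Sysmon-style field names chosen for illustration; this is not Plastine’s tooling.

```python
def telemetry_gaps(
    required: dict[str, set[str]], available: dict[str, set[str]]
) -> dict[str, set[str]]:
    """Return, per log source, the fields a rule needs but we don't collect."""
    gaps = {}
    for source, fields in required.items():
        # A source we don't ingest at all contributes every required field.
        missing = fields - available.get(source, set())
        if missing:
            gaps[source] = missing
    return gaps


rule_needs = {
    "process_creation": {"CommandLine", "ParentImage"},
    "network_connection": {"DestinationIp", "DestinationPort"},
}
we_collect = {"process_creation": {"Image", "CommandLine", "ParentImage"}}
print(telemetry_gaps(rule_needs, we_collect))
```

An empty result only means the rule has the data it needs to fire; it says nothing about whether the rule logic is right.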

My favorite step in this blog, though, is “Is prevention possible?” A metric we can all obsess over is rule count and coverage, and making sure both go up. More rules means more coverage of more attacks, right? As an industry, I think we need a separate metric that accounts for cases where we remove rules because we implemented a technical control that closes off the attack path altogether. Seeing Plastine call this out as part of rule development encourages teams to obsess less over coverage metrics and more over recommending and implementing security controls that make all of our lives easier.
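One way to express that separate metric is to track prevention alongside detection, so retiring a rule because a control closed the path doesn’t read as lost coverage. A minimal sketch with hypothetical technique names and fields; the metric design here is an assumption for illustration, not something from Plastine’s post.

```python
from dataclasses import dataclass


@dataclass
class TechniqueStatus:
    technique: str
    has_detection: bool         # an active rule covers this technique
    prevented_by_control: bool  # a technical control closes the attack path


def coverage_report(statuses: list[TechniqueStatus]) -> dict[str, float]:
    """Report detection, prevention, and combined coverage as fractions."""
    total = len(statuses) or 1  # avoid dividing by zero on an empty list
    detected = sum(s.has_detection for s in statuses)
    prevented = sum(s.prevented_by_control for s in statuses)
    addressed = sum(s.has_detection or s.prevented_by_control for s in statuses)
    return {
        "detection": detected / total,
        "prevention": prevented / total,
        "addressed": addressed / total,
    }


statuses = [
    TechniqueStatus("technique-a", True, False),
    TechniqueStatus("technique-b", False, True),  # rule retired after a control closed the path
    TechniqueStatus("technique-c", False, False),
    TechniqueStatus("technique-d", True, True),
]
print(coverage_report(statuses))  # {'detection': 0.5, 'prevention': 0.5, 'addressed': 0.75}
```

Detection coverage alone says 50%, but 75% of techniques are actually addressed once prevented-and-retired cases count.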

SITF: The SDLC Infrastructure Threat Framework by Wiz Research

We can’t always wait for MITRE to release new ATT&CK-style frameworks, and many great research and security teams help fill that gap with frameworks of their own for the whole industry. The SDLC Infrastructure Threat Framework, or SITF, is one of them. Here are some of the gaps it addresses and the features it offers:

They list five components of potential victim infrastructure: Endpoint, VC (version control), CI/CD, Registry & Production. You can see each of these being attacked in the supply chain attacks surrounding Trivy & Axios over the last two weeks.

Three stages (Initial Access, Discovery & Lateral Movement, and Post-Compromise) map to ATT&CK, except for Post-Compromise.

The techniques are specific and actionable. For example, Git Tag Manipulation was used in the Trivy attack: tags were removed and re-added pointing to an orphaned commit from a fork in the attacker’s repo.

Each technique has protective controls associated with it, so this is great reference material for anyone trying to harden their supply chain pipelines.
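Git Tag Manipulation lends itself to a simple hunting check: a release tag should point at a commit reachable from the default branch, so a tag re-pointed at an orphaned commit stands out. A minimal sketch over a toy commit graph with hypothetical commit IDs and tag names; against a real repository, `git merge-base --is-ancestor <tag> <branch>` answers the same reachability question one tag at a time.

```python
def reachable_commits(parents: dict[str, list[str]], head: str) -> set[str]:
    """All commits reachable from head by following parent links."""
    seen: set[str] = set()
    stack = [head]
    while stack:
        commit = stack.pop()
        if commit in seen:
            continue
        seen.add(commit)
        stack.extend(parents.get(commit, []))
    return seen


def tags_off_default_branch(
    parents: dict[str, list[str]], tags: dict[str, str], default_head: str
) -> list[str]:
    """Tags whose target commit is not in the default branch's history."""
    history = reachable_commits(parents, default_head)
    return sorted(tag for tag, commit in tags.items() if commit not in history)


parents = {"c3": ["c2"], "c2": ["c1"], "c1": [], "orphan": []}
tags = {"v1.0.0": "c1", "v1.1.0": "c3", "v1.2.0": "orphan"}
print(tags_off_default_branch(parents, tags, "c3"))  # ['v1.2.0']
```

Release branches that never merge back into the default branch would also show up here, so treat hits as leads rather than verdicts.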

PR3TACK by Atlassian CSIRT

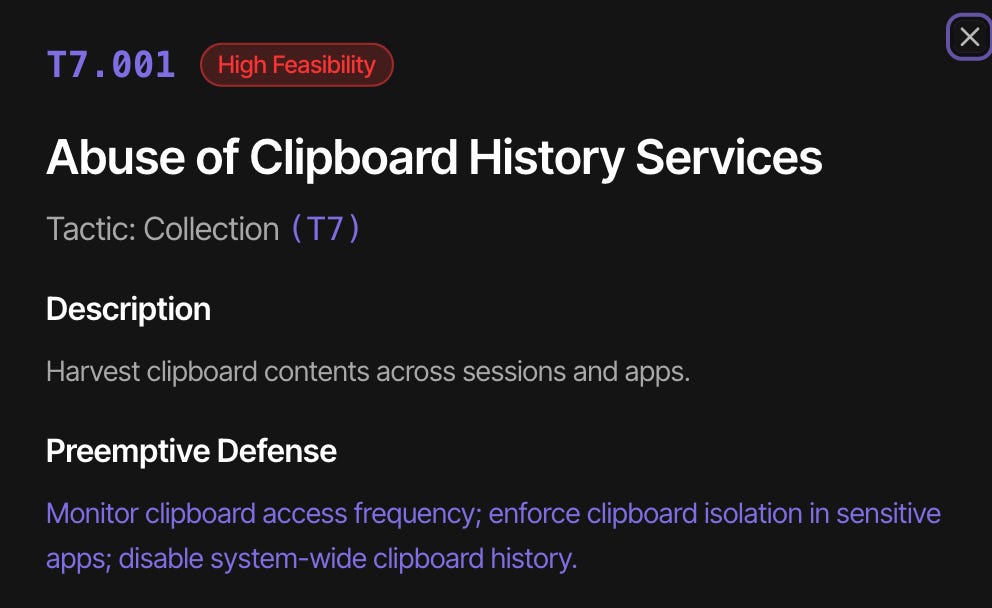

The Preemptive Tactics & Countermeasures Knowledgebase (PR3TACK) is an ATT&CK-style lexicon of tactics and techniques that highlight theoretical or “hard to observe” attacks. It’s a bit hard to understand at first, but once you dig into their matrix, there are some interesting entries. For example, the following collection technique:

Malware already steals clipboard contents, and operating systems cache clipboard history in some fashion, so it makes sense that this history becomes a collection target. Each technique has a preemptive defense section; in this case, it states there is no effective way to detect this type of attack due to a lack of telemetry.

It also introduces eight unique tactics that “extend beyond traditional technical compromise into governance, cognition, and sociotechnical domains.” There are supposedly longer descriptions for each one, but either the website doesn’t have a page to navigate to or my Brave browser is broken :3.

☣️ Threat Landscape

Axios Post Mortem by Jason Saayman

The Axios maintainer, and victim of last week’s supply chain attack, published a great post-mortem on GitHub Issues. Not much new information was shared, but you can tell they took the attack seriously and were the unfortunate victim of a convincing social engineering attack, likely led by DPRK operators. They could have taken some steps to prevent this from happening, such as:

Removing long-lived tokens for publishing out-of-band versions

OIDC-style publishing to issue short-lived tokens and force releases through GitHub

Immutable builds: this can mean many different things, but pinning to a specific version of axios (one that uses bundleDependencies, for example) can prevent consumers of axios from pulling in updated malicious versions
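For consumers, a quick audit is to flag dependencies in package.json that use a range or tag (^, ~, *, latest) rather than an exact version, since a range lets a fresh install resolve to a newly published, possibly malicious release. A minimal sketch with hypothetical version numbers; the regex is deliberately strict and will also flag valid-but-unusual exact forms like =1.2.3, and a committed lockfile installed with npm ci is the usual complementary control.

```python
import json
import re

# Exact semver only, e.g. "1.7.4" or "2.0.0-beta.1"; anything else is a range or tag.
EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$")


def unpinned_dependencies(package_json_text: str) -> dict[str, str]:
    """Return {package: spec} for dependencies not pinned to an exact version."""
    manifest = json.loads(package_json_text)
    flagged = {}
    for section in ("dependencies", "devDependencies", "optionalDependencies"):
        for name, spec in manifest.get(section, {}).items():
            if not EXACT_VERSION.match(spec):
                flagged[name] = spec
    return flagged


example = """{
  "dependencies": {"axios": "^1.7.0", "left-pad": "1.3.0"},
  "devDependencies": {"jest": "~29.0.0", "some-tool": "latest"}
}"""
print(unpinned_dependencies(example))
```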

Even if Axios hardened their build pipeline with the above bullets, th

Attackers Are Hunting High-Impact Node.js Maintainers in a Coordinated Social Engineering Campaign by Sarah Gooding

Following the Axios breach and the post-mortem above, Socket.dev researcher Gooding collected posts from several notable open-source maintainers describing how the same threat actors contacted them in the same campaign. It’s good to see so many of these maintainers openly share their stories; it brings transparency to the situation and a sense that they are all in this together. It’s bad to see how wide DPRK cast its net and that it succeeded with at least one victim.

These maintainers self-selected into sharing, which means many more likely received these phishing emails and Slack invites. I’m unsure whether there were more victims, but I wouldn’t be surprised.

I have to apologize to you all. I listened to lots of podcasts on a long drive over the weekend, and this one stuck with me in particular because of its coverage of the war in Iran. The U.S. military-industrial complex has warned of a “Cyber 9/11” event for as long as I’ve been in the industry. The idea is a thought exercise in which a single cyberattack triggers massive kinetic effects without the attacking nation-state ever leaving its computer screens.

It’s a term that’s been mocked relentlessly. Nation-states have effectively used these capabilities as spying tools, which they are very good at. But, starting with the Russia-Ukraine war, we’ve seen attacks that crossed that threshold. In Iran, there have been reports of Iranian actors using compromised devices to perform Battle Damage Assessments and to target strikes.

This is where I see security being relevant in modern conflict. The grugq and Tom Uren have an excellent conversation in this podcast on everything from cyber 9/11 doomers to the effective use of cybersecurity as an intelligence weapon in lieu of boots-on-the-ground collection activities.

Germany Doxes “UNKN,” Head of RU Ransomware Gangs REvil, GandCrab by Brian Krebs

I haven’t heard the words UNKN, REvil, or GandCrab in many years! The wheels of justice grind slowly but grind fine, and it looks like German authorities are joining the fray by publicly identifying UNKN and co-conspirators. For those unfamiliar with REvil, it was the O.G. ransomware gang that moved the cybercrime industry from small-scale attacks netting a few hundred to a few thousand dollars to a cartel-like operation that claimed to have extorted over two billion dollars.

🔗 Open Source

Blevene/structured-analysis-skill

A Claude plugin for performing structured analysis techniques used by organizations like the CIA and the broader U.S. intelligence community. This is super useful for people using Claude Code as a threat intelligence research aid. You can instruct your session to use the plugin or skills for everything from attribution and intelligence writing to malware analysis.

Maybe I’m an intel nerd, but I do think a lot of people or companies who write blog posts on threat research could use a toolset like this as a gut check before they start throwing out wild claims to grab attention.
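For readers new to structured analysis, one classic technique is Analysis of Competing Hypotheses (ACH): rate each piece of evidence against every hypothesis, then favor the hypothesis with the least inconsistent evidence rather than the one with the most supporting evidence. A minimal sketch of that scoring step with hypothetical hypotheses and evidence; this is not how the plugin is implemented.

```python
# Ratings: "C" = consistent, "I" = inconsistent, "N" = neutral / not applicable.
def rank_hypotheses(matrix: dict[str, dict[str, str]]) -> list[tuple[str, int]]:
    """Rank hypotheses by count of inconsistent evidence (fewest first)."""
    scores: dict[str, int] = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            scores.setdefault(hypothesis, 0)
            if rating == "I":
                scores[hypothesis] += 1
    # Ties are broken alphabetically so the output is deterministic.
    return sorted(scores.items(), key=lambda item: (item[1], item[0]))


matrix = {
    "infrastructure overlaps prior reporting": {"state actor": "C", "criminal group": "C", "insider": "I"},
    "ransom note left on hosts": {"state actor": "I", "criminal group": "C", "insider": "N"},
    "access used a valid employee account": {"state actor": "C", "criminal group": "C", "insider": "C"},
}
print(rank_hypotheses(matrix))  # [('criminal group', 0), ('insider', 1), ('state actor', 1)]
```

The last piece of evidence is consistent with every hypothesis, so it doesn’t help discriminate between them at all; ACH treats that as a cue to give such evidence less weight.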

Wiz’s repository for their SITF supply chain site is listed above in State of the Art.

With all the OSS supply chain attacks happening, I think it’s important for security engineers to become more knowledgeable about the OSS ecosystem. For example, how are new packages published or updated, and where can you get better visibility into the upstream publishing process and into how your organization consumes these packages?

The Elastic Security team made that a little easier with a fully packaged open-source tool that monitors PyPI and npm for new packages and package diffs. It normalizes them and feeds them into a Claude prompt for analysis and subsequent alerting.
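One of the highest-signal diffs between two versions of an npm package is its package.json: a new dependency or a new install-time lifecycle script (preinstall, install, postinstall) is exactly what supply chain malware tends to add. A minimal sketch of that comparison on two already-parsed manifests, using a hypothetical package and dependency names; it is not Elastic’s implementation.

```python
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def diff_manifests(old: dict, new: dict) -> dict[str, list[str]]:
    """Compare two parsed package.json files for supply-chain red flags."""
    old_deps = set(old.get("dependencies", {}))
    new_deps = set(new.get("dependencies", {}))
    old_scripts = old.get("scripts", {})
    new_scripts = new.get("scripts", {})
    # A hook counts if it exists in the new version and differs from the old one.
    changed_hooks = [
        hook for hook in INSTALL_HOOKS
        if hook in new_scripts and new_scripts[hook] != old_scripts.get(hook)
    ]
    return {
        "added_dependencies": sorted(new_deps - old_deps),
        "removed_dependencies": sorted(old_deps - new_deps),
        "changed_install_hooks": changed_hooks,
    }


v1 = {"name": "example-pkg", "dependencies": {"follow-redirects": "^1.15.0"}}
v2 = {
    "name": "example-pkg",
    "dependencies": {"follow-redirects": "^1.15.0", "new-helper-dep": "^4.2.1"},
    "scripts": {"postinstall": "node setup.js"},
}
print(diff_manifests(v1, v2))
```

A hit on both a new dependency and a new postinstall hook in the same release is the kind of diff worth handing to a human (or an LLM prompt) for review.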

To continue the supply chain security awareness story, iron-proxy helps prevent data exfiltration and command-and-control callouts by injecting a workload into your CI/CD pipeline that performs network monitoring and egress blocking. The project says it can be used for any workload, so in theory you could run it on a developer container or a cloud machine, but IMHO it should shine in test runners within CI/CD pipelines.
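The core idea behind egress blocking is a default-deny allowlist: a CI job should only talk to the registries and hosts it actually needs. A minimal sketch of that decision with a hypothetical allowlist; it is not iron-proxy’s configuration format or API, and a real proxy enforces this at the network layer rather than in application code.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for a CI job that installs npm/PyPI packages and talks to GitHub.
ALLOWED_HOSTS = frozenset({
    "registry.npmjs.org",
    "pypi.org",
    "files.pythonhosted.org",
    "github.com",
})


def is_egress_allowed(url: str, allowed: frozenset = ALLOWED_HOSTS) -> bool:
    """Default deny: allow only exact hosts or their subdomains."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in allowed)


for url in ("https://registry.npmjs.org/axios", "https://github.com.evil.example/x"):
    print(url, is_egress_allowed(url))
```

Matching on a leading dot is what keeps a lookalike such as github.com.evil.example from passing as github.com.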

L0p4Map is a network scanning tool with a stunning front end. I think something like this would be useful on your network: it can scan for devices, fingerprint them, and perform basic vulnerability scanning to help you understand how an attacker might probe your network for lateral movement.
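Under the hood, the most basic form of this kind of scanning is a TCP connect scan: try to complete a handshake on each port and record the ones that accept. A minimal, sequential sketch using Python’s standard socket module, run against hosts on your own network; it is not L0p4Map’s implementation, and real scanners parallelize, fingerprint services, and rate-limit.

```python
import socket


def tcp_connect_scan(host: str, ports: list[int], timeout: float = 0.5) -> list[int]:
    """Return the ports on host that accept a full TCP connection."""
    open_ports = []
    for port in ports:
        try:
            # create_connection completes the three-way handshake or raises.
            with socket.create_connection((host, port), timeout=timeout):
                open_ports.append(port)
        except OSError:
            # Refused, unreachable, or timed out: treat as not open.
            pass
    return open_ports


if __name__ == "__main__":
    print(tcp_connect_scan("127.0.0.1", [22, 80, 443, 8080]))
```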