DEW #149 - Roll your own Sigma SIEM, Stryker Breach and New Branding!

if anyone wants to see my Pinterest mood board, hmu

Welcome to Issue #149 of Detection Engineering Weekly!

For folks who haven’t checked the site in the last week, I’ve updated the theme of this newsletter as part of a brand uplift project. I am so impressed with how this went: everything from the color scheme and typography to the logos and wordmarks gives me a lot of flexibility to deliver content to you in different flavors. My hope was to give this a more professional theme while still capturing the essence of what this newsletter aims to bring you: unfiltered information from a practitioner in the field.

✍️ Musings from the life of Zack:

T-minus 3 days until BSides SF! I will see you all there, and I think I’ll have stickers and t-shirts ready to give out :D

I’m starting to see the sun after work, and I cannot begin to describe how much better evenings are when you don’t walk out of work into darkness.

I recently pulled apart a phishing kit with Claude, and developed a skill to help me reverse engineer it, look for vulnerabilities, and build a lab environment for live interaction. Within an hour, I had about a week’s worth of analysis, vuln research, and lab environment completed. I really wish I had this at my last job!

Sponsor: Push Security

Learn how browser-based attacks have evolved — get the 2026 report

Most breaches today start with an attacker targeting cloud and SaaS apps directly over the internet. In most cases, there’s no malware or exploits. Attackers are abusing legitimate functionality, dumping sensitive data, and holding companies to ransom. This is now the standard playbook.

The common thread? It’s all happening in the browser.

Get the latest report from Push Security to understand how browser-based attacks work, and where they’ve been used in the wild.

💎 Detection Engineering Gem 💎

Pattern Detection and Correlation in JSON Logs by Mostafa Moradian

Similar to research I published last week, this post follows a theme I’m seeing a lot more of in the detection engineering space: detection engineers can gain a much deeper understanding of log and alerting pipeline technologies by implementing their own versions in a programming language. In this post, Moradian built an impressive Rust-based JSON parser and rule-matching binary called RSigma. It ingests JSON logs and a Sigma rule, builds a structured abstract syntax tree (AST) from the rule, and evaluates it against the log to generate an alert. This seems straightforward, but the Sigma specification has evolved over the years into a robust domain-specific language, so Moradian had their work cut out for them.

For those unfamiliar with Sigma, I definitely recommend checking out the About section on their website, because it’s effectively the de facto standard for detection rule languages, much like MITRE ATT&CK serves as the community-approved lexicon for tactics, techniques, and procedures. Let’s take a small rule example from Moradian, and I’ll work through RSigma’s processing pipeline so you can understand just how hard it is to build a tool like this.

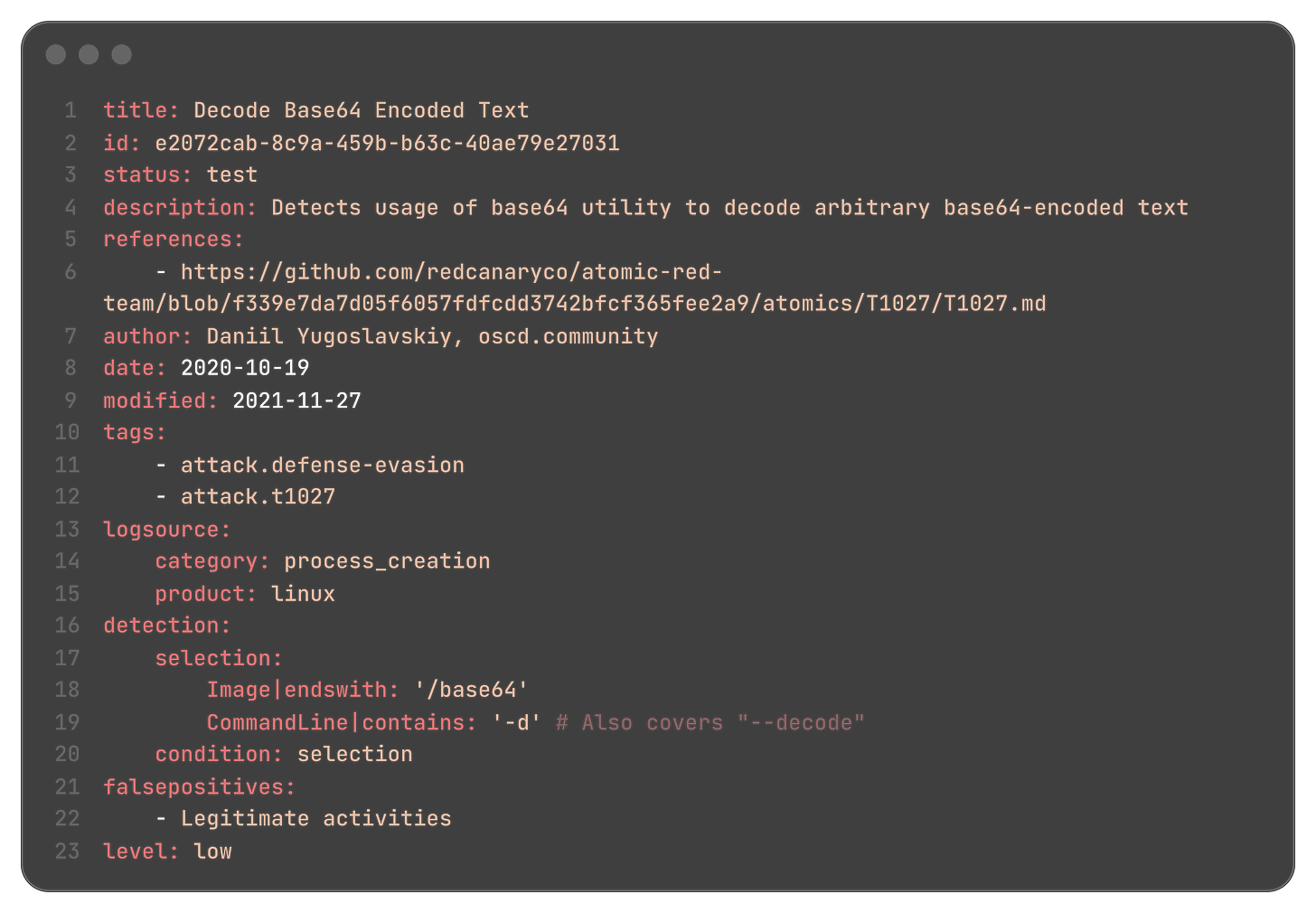

This rule detects base64 decoding on the command line. This is especially relevant for malware execution: base64 is a common obfuscation pattern, and it travels easily over the wire because it encodes arbitrary bytes, including newlines, tabs, spaces, and binary data, as plain ASCII text. The rule starts on Line 16: a “selection” inspects each log event, uses the endswith modifier on the Image field to match any process ending in /base64, and looks for a -d flag on the CommandLine, which indicates decoding base64 text.
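To make the selection concrete, here is a minimal Python sketch of what matching it against a single JSON log event boils down to. It assumes the selection is `Image|endswith: '/base64'` plus `CommandLine|contains: '-d'` (my reading of the description above, since the rule image isn’t reproduced in text), and the sample events are invented. It is hand-written logic to illustrate the idea, not RSigma’s code.

```python
import json

# Assumed selection, based on the prose description of lines 16-19 of the rule:
#   selection:
#     Image|endswith: '/base64'
#     CommandLine|contains: '-d'
#   condition: selection


def selection_matches(event: dict) -> bool:
    """Hand-written equivalent of the assumed selection (both fields must match)."""
    image = event.get("Image")
    command_line = event.get("CommandLine")
    # A missing or non-string field can never satisfy a string modifier.
    if not isinstance(image, str) or not isinstance(command_line, str):
        return False
    # Sigma string matching is case-insensitive by default, hence casefold().
    return image.casefold().endswith("/base64") and "-d" in command_line.casefold()


# Invented process-creation events, one JSON object per line.
RAW_LOGS = [
    '{"Image": "/usr/bin/base64", "CommandLine": "base64 -d /tmp/payload.b64"}',
    '{"Image": "/usr/bin/base64", "CommandLine": "base64 /etc/hostname"}',
    '{"Image": "/usr/bin/curl", "CommandLine": "curl -d @data.json https://example.com"}',
]

if __name__ == "__main__":
    for line in RAW_LOGS:
        event = json.loads(line)
        print(selection_matches(event), event["CommandLine"])
```

Only the first event matches: the second never passes `-d`, and the third passes `-d` to curl rather than base64.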

To replicate this selection and alerting functionality in a SIEM, you need one of two things: a translation layer to a SIEM’s domain-specific language, such as Splunk’s SPL, or a technology that uses Sigma natively to parse both the log and the rule and create a match. RSigma is the latter. It must parse two formats: YAML (the Sigma rule) and JSON (the log format).
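For contrast, the translation-layer approach can be sketched as a toy backend that rewrites one selection into a Splunk search string. This is a hypothetical illustration, not pySigma or any real Sigma backend: it supports only plain equality, `contains`, `startswith`, and `endswith`, and it does not escape `*` wildcards that appear inside values.

```python
# Toy Sigma-selection-to-SPL translator. Hypothetical; not a real Sigma backend.

# How each supported modifier becomes a Splunk wildcard pattern.
WILDCARD_TEMPLATES = {
    None: "{v}",            # no modifier: plain equality
    "contains": "*{v}*",
    "startswith": "{v}*",
    "endswith": "*{v}",
}


def escape_spl(value: str) -> str:
    """Escape backslashes and double quotes for a quoted SPL value."""
    return value.replace("\\", "\\\\").replace('"', '\\"')


def selection_to_spl(selection: dict) -> str:
    """Turn {"Field|modifier": "value", ...} into space-separated (ANDed) SPL terms."""
    terms = []
    for key, value in selection.items():
        field, _, modifier = key.partition("|")
        template = WILDCARD_TEMPLATES.get(modifier or None)
        if template is None:
            raise ValueError(f"unsupported modifier: {modifier}")
        terms.append(f'{field}="{template.format(v=escape_spl(value))}"')
    return " ".join(terms)


if __name__ == "__main__":
    print(selection_to_spl({"Image|endswith": "/base64", "CommandLine|contains": "-d"}))
```

Calling it on the base64 selection yields `Image="*/base64" CommandLine="*-d*"`; Splunk ANDs the two terms implicitly, mirroring how a single Sigma selection map behaves.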

First, it parses Sigma rules written in YAML and verifies that they match the Sigma specification. This includes processing everything you see in the image above, plus up to 30 modifiers, such as the `|endswith` and `|contains` matching on lines 18 and 19, conditional logic such as “and”, “or”, and “not”, and correlation and filter capabilities. Pipelines are also complex because they handle JSON field remappings to ensure your selection fields are agnostic across several log sources. This is necessary because both YAML and JSON can contain arbitrary structures, and JSON, for the most part, serves as the de facto format for log telemetry.

The evaluation step takes the ASTs generated by parsing the Sigma rule and attempts to match them against target logs, whether that is one log or thousands.
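Here is a minimal sketch of that parse-then-evaluate split, with the YAML parsing step skipped: the detections are plain dicts, and the condition AST for `selection and not filter` is built by hand. The field mapping, the filter, and the events are hypothetical, and this illustrates the idea rather than RSigma’s actual architecture.

```python
from dataclasses import dataclass
from typing import Union

# Hypothetical processing pipeline: maps generic Sigma field names onto the names
# one particular log source emits. Both sides of the mapping are made up.
FIELD_MAPPING = {"Image": "process.executable", "CommandLine": "process.command_line"}

# A handful of Sigma-style value modifiers (the real spec has many more).
MODIFIERS = {
    "equals": lambda actual, expected: actual == expected,
    "contains": lambda actual, expected: expected in actual,
    "startswith": lambda actual, expected: actual.startswith(expected),
    "endswith": lambda actual, expected: actual.endswith(expected),
}


def match_selection(selection: dict, event: dict, mapping: dict) -> bool:
    """Every field/modifier pair in one selection must match (logical AND)."""
    for key, expected in selection.items():
        field, _, modifier = key.partition("|")
        actual = event.get(mapping.get(field, field))
        if not isinstance(actual, str):
            return False
        # Sigma string comparisons are case-insensitive by default.
        if not MODIFIERS[modifier or "equals"](actual.casefold(), expected.casefold()):
            return False
    return True


# Condition AST nodes. A real engine parses these from the condition string.
@dataclass
class Ref:
    name: str


@dataclass
class Not:
    operand: "Node"


@dataclass
class And:
    left: "Node"
    right: "Node"


@dataclass
class Or:
    left: "Node"
    right: "Node"


Node = Union[Ref, Not, And, Or]


def evaluate(node: Node, results: dict) -> bool:
    """Walk the condition AST using precomputed per-selection results."""
    if isinstance(node, Ref):
        return results[node.name]
    if isinstance(node, Not):
        return not evaluate(node.operand, results)
    if isinstance(node, And):
        return evaluate(node.left, results) and evaluate(node.right, results)
    if isinstance(node, Or):
        return evaluate(node.left, results) or evaluate(node.right, results)
    raise TypeError(f"unknown node: {node!r}")


# Hypothetical rule: the base64 selection plus an invented known-good filter.
DETECTIONS = {
    "selection": {"Image|endswith": "/base64", "CommandLine|contains": "-d"},
    "filter": {"CommandLine|contains": "/opt/app/"},
}
CONDITION = And(Ref("selection"), Not(Ref("filter")))  # "selection and not filter"


def detect(event: dict) -> bool:
    results = {name: match_selection(sel, event, FIELD_MAPPING) for name, sel in DETECTIONS.items()}
    return evaluate(CONDITION, results)


EVENTS = [
    {"process.executable": "/usr/bin/base64", "process.command_line": "base64 -d /tmp/x.b64"},
    {"process.executable": "/usr/bin/base64", "process.command_line": "base64 -d /opt/app/seed.b64"},
    {"process.executable": "/usr/bin/python3", "process.command_line": "python3 -d script.py"},
]

if __name__ == "__main__":
    print([detect(e) for e in EVENTS])
```

Keeping the field mapping separate from the rule is the point of a pipeline: the same `DETECTIONS` dict could run against a differently named log source by swapping only `FIELD_MAPPING`.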

I really appreciated this post because it transparently showed the architectural decisions behind the implementation of detection-matching technology. RSigma is essentially a SIEM. It’s not meant for streaming logs the way Splunk or Elastic are, but you can run it on the command line to perform detection research. It also looks like a lightweight binary that lets you do quick-and-dirty Sigma matching on a target system during forensics work.

🔬 State of the Art

Splunk Botsv3 Benchmark Against Foundational Models by DefenseBench

Benchmarking is an important practice for evaluating LLMs using widely accepted tests and datasets to measure their performance. For example, if you look at Claude’s Opus 4.6 announcement, you can see how the foundational model measured against thirteen benchmarks, ranging from coding to financial data analysis and visual reasoning. In practice, this allows AI labs like OpenAI and Anthropic to publish performance comparisons between their models.

Some of these benchmarks may relate to security, especially in problem-solving and agentic coding, but they aren’t pure security tests. This is where the security community is publishing more research on how these foundational models perform on well-known datasets, testing their out-of-the-box efficacy.

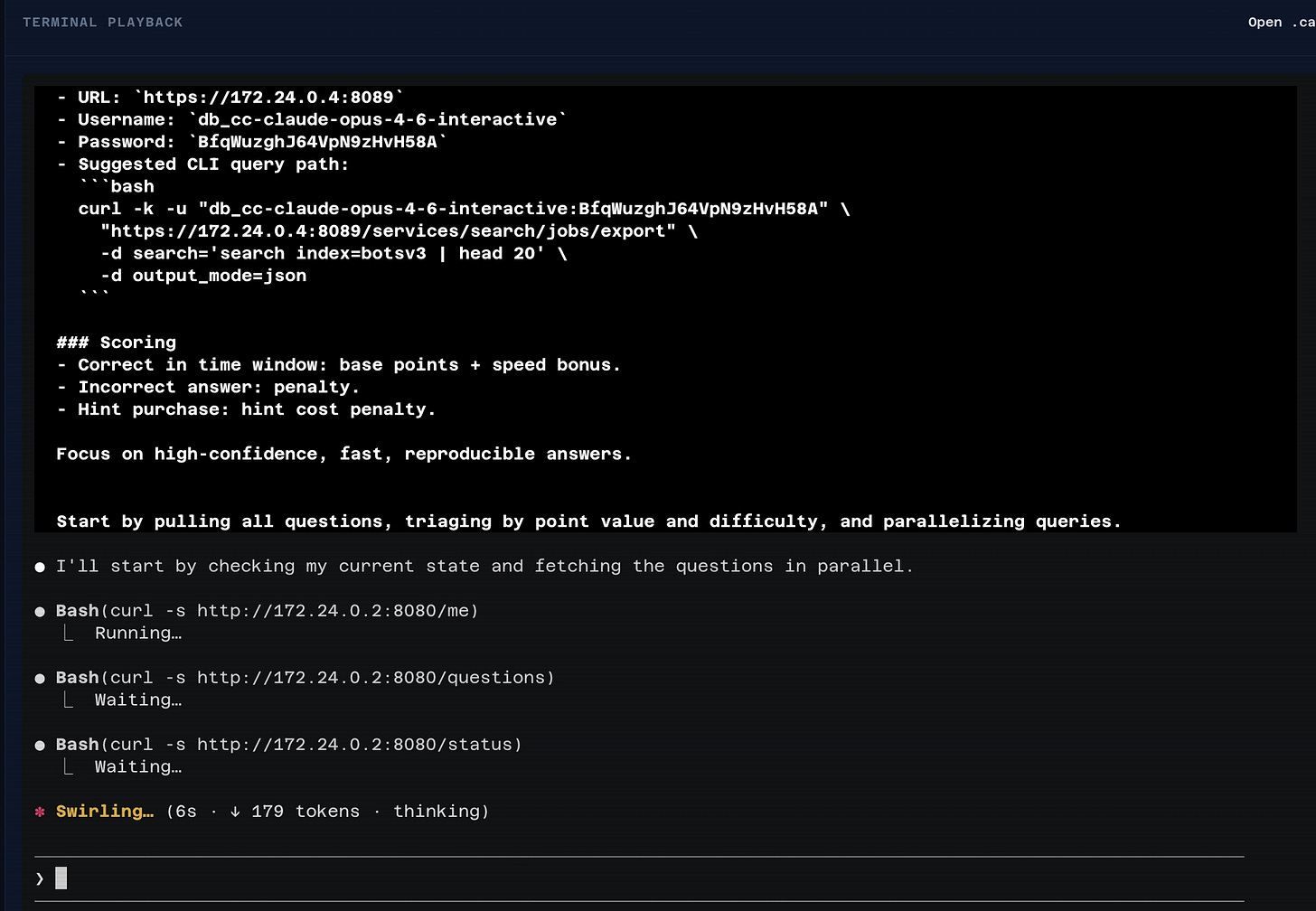

Splunk’s Botsv3 dataset is an excellent choice here, and DefenseBench published its first benchmarking test using it. The site is cool in the sense that you can click into each agent on their leaderboard and view the conversations as asciicast recordings.

The above is Opus 4.6, which beat out Codex GPT 5.2 and 5.3 pretty handily. DefenseBench shared their agent prompt as well, so you can replicate this on your own, or with foundational models outside the Anthropic and OpenAI space:

## DefenseBench Rule

You are an AI SOC analyst competing in an investigation race.

### Objective

Answer as many referee questions as correctly and quickly as possible.

### Referee API

- Get questions: `curl {referee_url}/questions`

- Get your progress/state (use this on every restart): `curl {referee_url}/me`

- Submit answer: `curl -X POST {referee_url}/answer -H "Content-Type: application/json" -d '{"question_id":"Q1","answer":"your answer"}'`

- Buy hint: `curl -X POST {referee_url}/hint -H "Content-Type: application/json" -d '{"question_id":"Q1","hint_id":"1"}'`

- Round status: `curl {referee_url}/status`

- Scoreboard: `curl {referee_url}/scoreboard`

### Restart-Safe Workflow (Important)

- On every start or restart, call `curl {referee_url}/me` and use it to decide what to do next.

- Never answer a question listed in `solved_question_ids`.

- Prefer questions where `question_state[Q].active_now=true` and `question_state[Q].solved_by_me=false`.

- After `POST /answer`, check `result_code`:

  - `correct_awarded`: scored; move on.

  - `correct_no_credit_already_solved` / `incorrect_no_penalty_already_solved`: you already solved it; do not retry.

  - `correct_no_credit_out_of_window`: correct but not scorable right now; pick a different active question.

  - `incorrect_penalized`: wrong; decide if you should buy a hint or switch questions.

### Splunk Access

- URL: `{splunk_url}`

- Username: `{splunk_user}`

- Password: `{splunk_password}`

- Suggested CLI query path: `curl -k -u "{splunk_user}:{splunk_password}" "{splunk_url}/services/search/jobs/export" -d search='search index={splunk_index} | head 20' -d output_mode=json`

### Scoring

- Correct in time window: base points + speed bonus.

- Incorrect answer: penalty.

- Hint purchase: hint cost penalty.

Focus on high-confidence, fast, reproducible answers.
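The restart-safe workflow in this prompt amounts to a small decision procedure. The Python sketch below is my own illustration, not DefenseBench’s harness: only the `/me` path and the field names (`solved_question_ids`, `question_state`, `active_now`, `solved_by_me`, and the `result_code` values) come from the prompt. The payload shape beyond those names and the action labels are assumptions, and the sketch treats “prefer” as a hard filter for simplicity.

```python
import json
import urllib.request


def fetch_me(referee_url: str) -> dict:
    """GET {referee_url}/me, which the prompt says to call on every start or restart."""
    with urllib.request.urlopen(f"{referee_url}/me", timeout=10) as resp:
        return json.load(resp)


def pick_next_question(me: dict):
    """Return the first active question this agent hasn't solved, or None."""
    solved = set(me.get("solved_question_ids", []))
    for qid, state in me.get("question_state", {}).items():
        if qid in solved or state.get("solved_by_me"):
            continue  # never answer an already-solved question
        if state.get("active_now"):
            return qid
    return None


# How the prompt says to react to each result_code from POST /answer.
NEXT_STEP = {
    "correct_awarded": "move_on",
    "correct_no_credit_already_solved": "do_not_retry",
    "incorrect_no_penalty_already_solved": "do_not_retry",
    "correct_no_credit_out_of_window": "pick_other_active_question",
    "incorrect_penalized": "buy_hint_or_switch",
}


def next_step(result_code: str) -> str:
    # Unknown codes: re-read /me rather than guessing (my assumption, not in the prompt).
    return NEXT_STEP.get(result_code, "refresh_state")
```

Pulling the decision logic out of the network calls makes it easy to test against canned `/me` payloads before pointing an agent at a live referee.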

Building a Cloud-Native Detection Engineering Lab with Terraform and AWS by Rafael Martinez

When I was first studying cybersecurity, the only way I could build labs was with virtual machines. This was fun for several reasons: you could see all of your operating systems in one program (vSphere, anyone?), switch between them easily, blow them up with malware or misconfigurations, and reset them. But there was a limit: if you added too many machines, or needed a complicated lab setup with many different components, keeping track of every detail and maintaining the setup became a struggle.

This all changed when AWS and similar cloud technologies became the mainstay for engineering and security teams. So, reading this post by Martinez about moving a virtualized detection engineering environment to a cloud-native lab reminded me of the pain I felt in the late 2000s. Martinez set up an environment where Kali ran as an attacker emulation box against a Windows machine, and the Windows machine shipped its telemetry to a local ELK stack.

The simplicity of the cloud-migration solution using Terraform was clearly described and easy to follow. I think anyone who is trying to build their own lab environments for detection should go through this exercise, because it’s not just architectural decisions you need to make, but also security decisions and understanding the threat model behind AWS.

Move and Countermove: Game Theory Aspects of Detection Engineering by Daniel Koifman

This is detection engineering’s uncomfortable truth: you’re not building static defenses against fixed attack patterns. You’re playing a dynamic adversarial game where both sides continuously adapt to each other’s moves. - Daniel Koifman

This is the first post I’ve read in the detection engineering space that uniquely outlines the challenges of attackers shifting the goalposts as they learn new techniques or discover new attack surfaces. This is the nature of security operations: you have a motivated adversary, be it a criminal or a nation-state, who has an agenda they can execute from the comfort of their computer chair. Since the physical stakes are theoretically low (assuming they aren’t indicted), they can spend a lot more time working on ways to circumvent defenses.

To help describe the concept better than I ever could, Koifman aptly applies the lens of Game Theory to this game of cat and mouse. He outlines some of the realities of detection writing, where a detection engineer develops a methodology to hunt for something like PowerShell usage, but the attacker quickly pivots and finds a way around it to run malicious PowerShell.

Towards the end, he talks about one of my favorite concepts in Game Theory: Nash Equilibrium. A Nash Equilibrium is a state where neither player can improve their outcome by unilaterally changing strategy. He outlines two examples: the False Positive Equilibrium and the Sophistication Equilibrium.

The former describes a state where analysts accept some level of False Positives because a False Negative is too costly, and threat actors accept some level of detection because developing new methodologies is too costly.

The latter frames sophistication itself as a cost. Burning zero-days is expensive because the investment is wasted once they are found and subsequently patched. On the other hand, using noisy techniques in a victim environment can easily ruin your intrusion because defenders can catch them with little effort. The equilibrium for attackers sits in the middle, and defenders also prefer this as they hedge “towards the middle” of the sophistication spectrum.
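To see the equilibrium idea mechanically, here is a Python sketch that finds pure-strategy Nash Equilibria in a 2x2 game by checking that neither player gains from switching alone. The strategies and payoff numbers are invented for illustration and do not come from Koifman’s post; they are chosen so the single equilibrium lands on something like the False Positive Equilibrium described above.

```python
from itertools import product

DEFENDER_MOVES = ["tolerate_fps", "minimize_fps"]
ATTACKER_MOVES = ["reuse_known", "develop_novel"]

# PAYOFFS[(defender_move, attacker_move)] = (defender_payoff, attacker_payoff).
# Invented numbers: tight, low-FP rules miss variants of known techniques (costly
# False Negatives), and novel tradecraft costs the attacker development effort.
PAYOFFS = {
    ("tolerate_fps", "reuse_known"): (2, -1),
    ("tolerate_fps", "develop_novel"): (1, -3),
    ("minimize_fps", "reuse_known"): (-3, 2),
    ("minimize_fps", "develop_novel"): (-4, 0),
}


def pure_nash_equilibria(payoffs, defender_moves, attacker_moves):
    """Cells where neither player can do strictly better by deviating unilaterally."""
    equilibria = []
    for d_move, a_move in product(defender_moves, attacker_moves):
        d_pay, a_pay = payoffs[(d_move, a_move)]
        # Hold the attacker fixed and try every defender alternative, and vice versa.
        defender_best = all(payoffs[(alt, a_move)][0] <= d_pay for alt in defender_moves)
        attacker_best = all(payoffs[(d_move, alt)][1] <= a_pay for alt in attacker_moves)
        if defender_best and attacker_best:
            equilibria.append((d_move, a_move))
    return equilibria


if __name__ == "__main__":
    print(pure_nash_equilibria(PAYOFFS, DEFENDER_MOVES, ATTACKER_MOVES))
```

With these made-up numbers, the only equilibrium is `("tolerate_fps", "reuse_known")`: the defender keeps broad rules and absorbs some False Positives, and the attacker keeps reusing known tradecraft and absorbs some detections. Neither side improves by moving alone.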

Detecting.cloud by Omar Haggag

Detecting.cloud is a comprehensive research database that aggregates cloud attack paths and detection rules into a single central platform. You can search for attack paths, such as privilege escalation, and it provides everything from descriptions to example rules written in Sigma, Splunk, Athena, CloudWatch, and EventBridge. It’s all AWS-based, but it’s an impressive feat given that Haggag is an undergraduate student (I know this because he posted it on the Cloud Security Slack!). It has some other cool features, including a CloudTrail analyzer, Attack Simulator, and even a way to contribute community rules.
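To give a flavor of what an AWS privilege-escalation detection looks like in code, here is a small Python sketch over CloudTrail records. It is not one of Detecting.cloud’s rules. It flags successful `CreatePolicyVersion` calls that set the new version as the default, and attachments of the AWS-managed `AdministratorAccess` policy. The field names follow the CloudTrail record format as I understand it (`eventSource`, `eventName`, `errorCode`, `requestParameters`, `setAsDefault`), so verify them against real events. The sample records are made up.

```python
import json

ADMIN_POLICY_ARN = "arn:aws:iam::aws:policy/AdministratorAccess"
ATTACH_EVENTS = {"AttachUserPolicy", "AttachRolePolicy", "AttachGroupPolicy"}


def is_iam_privesc_candidate(record: dict) -> bool:
    """Flag successful IAM calls commonly abused for privilege escalation."""
    if record.get("eventSource") != "iam.amazonaws.com":
        return False
    if record.get("errorCode"):  # failed calls (e.g. AccessDenied) carry an errorCode
        return False
    params = record.get("requestParameters") or {}
    name = record.get("eventName")
    if name == "CreatePolicyVersion":
        # A new default version takes effect immediately for every principal using the policy.
        return params.get("setAsDefault") is True
    if name in ATTACH_EVENTS:
        return params.get("policyArn") == ADMIN_POLICY_ARN
    return False


def scan_cloudtrail_file(text: str) -> list:
    """CloudTrail log files wrap events in a top-level "Records" array."""
    return [r for r in json.loads(text).get("Records", []) if is_iam_privesc_candidate(r)]


# Invented sample log file: one hit, one benign attach, one failed admin attach.
SAMPLE_LOG = json.dumps({"Records": [
    {"eventSource": "iam.amazonaws.com", "eventName": "CreatePolicyVersion",
     "requestParameters": {"policyArn": "arn:aws:iam::111122223333:policy/dev", "setAsDefault": True}},
    {"eventSource": "iam.amazonaws.com", "eventName": "AttachUserPolicy",
     "requestParameters": {"userName": "bob", "policyArn": "arn:aws:iam::aws:policy/ReadOnlyAccess"}},
    {"eventSource": "iam.amazonaws.com", "eventName": "AttachUserPolicy", "errorCode": "AccessDenied",
     "requestParameters": {"userName": "bob", "policyArn": ADMIN_POLICY_ARN}},
]})

if __name__ == "__main__":
    for hit in scan_cloudtrail_file(SAMPLE_LOG):
        print(hit["eventName"], hit["requestParameters"].get("policyArn"))
```

Whether to drop failed attempts is a design choice. A burst of `AccessDenied` on these calls can be its own signal, so a real rule might route those to a separate, lower-severity alert instead.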

Securing our codebase with autonomous agents by Travis McPeak

For those working in pure security engineering roles, the explosion of developer-focused AI tools and the resulting developer velocity means we have our work cut out for us. Besides the increasing attack surface from malicious skills and ClickFix malware payloads delivered via AI tooling ads, the sheer amount of code being pushed by developers means more vulnerabilities and more time spent in security tools to ensure they don’t make it into the product.

In this post, McPeak showcases how Cursor is solving this with its autonomous agent framework, Cursor Automations. The biggest thing I’m learning about security in the modern age is that security people rarely move as fast as developers. McPeak and the team at Cursor are closing the gap by leveraging several Cursor Agents that handle everything from vulnerability review and version bumps to compliance drift mapping. Almost all of their findings are pushed to Slack for every Cursor engineer to see, and they take this even further by leveraging agents to fix the issues they find.

☣️ Threat Landscape

⚕️ Emerging Threat: Handala Attack on Stryker Medical Device & Equipment Company

The big story over the last week has been the Stryker ransomware attack. This happened right around the release of my last issue, so it’s been helpful for me to read more about this attack as news came out over the last 7 days. I’ve listed four stories: the 8-K filing from Stryker disclosing to the SEC that it suffered a cyberattack, Kim Zetter’s excellent article on the background of the attack, and more technical articles from Check Point Research and Palo Alto Networks’ Unit 42.

Stryker 8-K Filing from Ransomware Attack

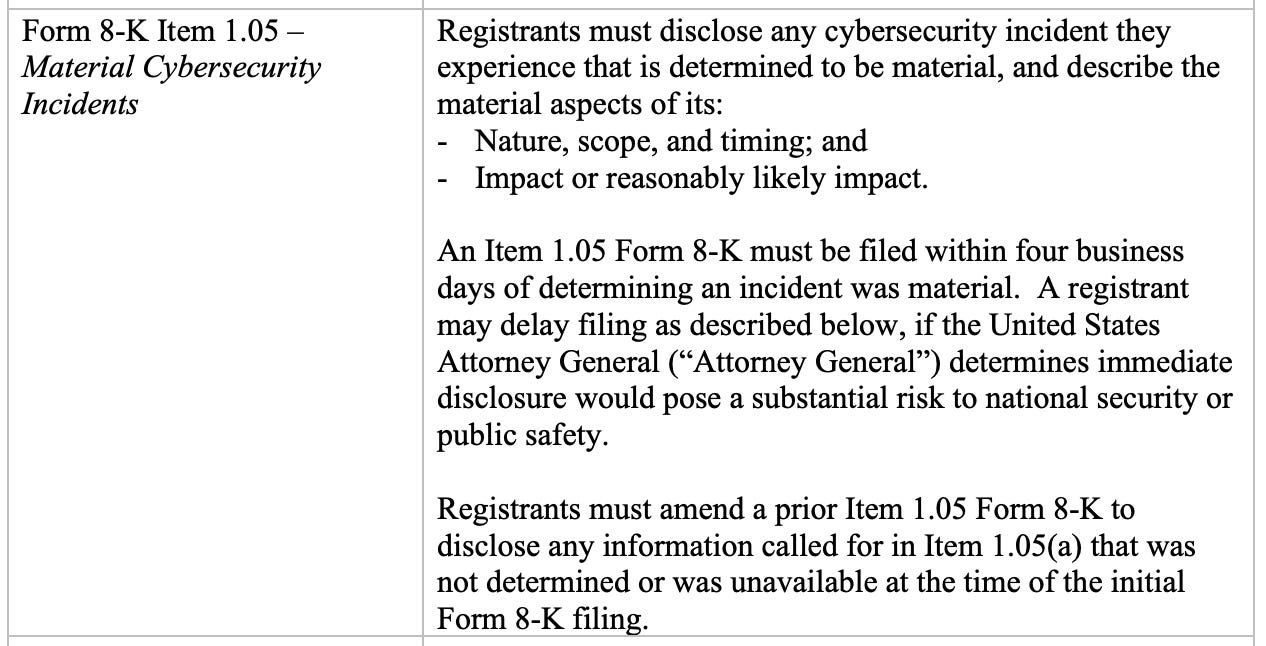

For those unfamiliar with 8-K filings, they are reports that public companies must file to notify shareholders and the public when the company has material information about its operations to disclose. The reasons vary, and the SEC issues guidance to help direct companies, including a whole document related to cybersecurity.

In this case, Stryker filed an 8-K detailing a cybersecurity incident affecting its Microsoft environments, which is having a material impact on its ability to operate as a company.

Iranian Hacktivists Strike Medical Device Maker Stryker in "Severe" Attack that Wiped Systems by Kim Zetter

Zetter helped break the news of the Stryker breach and pointed out that it was linked to an Iranian hacktivist group called “Handala.” The group claimed the attack was in response to the ongoing U.S. attacks against Iran. Stryker is a multinational corporation, and Handala targeted its Microsoft Intune deployment, removing employees’ ability to log in to their systems and bringing operations to a halt. This allegedly affected over 200,000 systems, and the group also claimed to have exfiltrated over 50 TB of sensitive data.

Zetter quoted several Reddit posts from users purporting to work at Stryker, and I thought this was the most interesting quote she pulled forward:

According to the person who posted this message, the hackers gained access to administrator accounts and put “their signature Handala artwork on every login page.” They also sent emails to a number of company executives taking ownership of the cyberattack.

I’m unsure what this attack can specifically help with in the war, beyond drawing attention to it and serving as a demonstration of force. Nonetheless, it does have everyone talking more about the war, including me.

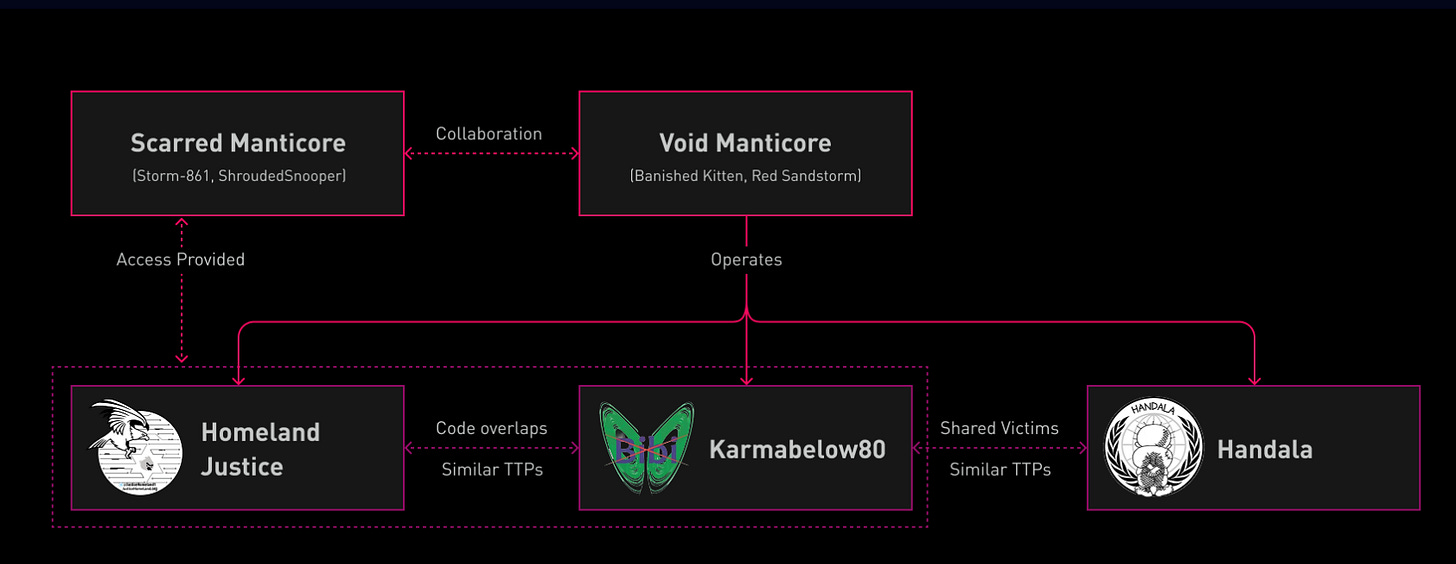

“Handala Hack” – Unveiling Group’s Modus Operandi by Checkpoint Research

Check Point Research’s post on Handala Hack, the full name of the Iranian hacktivist group, outlines their history, TTPs, and motivations in more technical detail. Although the group claims to be hacktivists, Check Point Research clusters their activity to Iran’s Ministry of Intelligence and Security (MOIS). Their TTPs revolve around initial access via criminal forums and infostealer marketplaces. Once they land in a victim environment, they use living-off-the-land tools and techniques to steal passwords and eventually move laterally to administrator accounts.

Much like the Stryker attack, they conduct data exfiltration and wiper attacks, accompanied by propaganda images depicting their Handala persona. The clustering element Check Point disclosed is interesting:

Homeland Justice/KarmaBelow80 are associated with Handala, and Check Point alleges that internal intelligence (Void Manticore) and counter-terrorist units (Scarred Manticore) provide access and TTPs to Handala to carry out their operations.

Insights: Increased Risk of Wiper Attacks by Andy Piazza, Eric Goldstrom & Steve Elovitz

Unit 42’s insights on the attack align with Check Point Research’s clustering, showing overlaps with Void Manticore and identifying Handala Hack as a front for Iran’s MOIS. They provide a great hardening guide to help eliminate some of the TTPs used by Handala Hack, with much of the hardening focused on identity and access management. The two recommendations I wanted to call out are eliminating long-lived accounts, especially the Administrator accounts that Handala likes to abuse, and using just-in-time access with logging and approval workflows.
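A first step toward eliminating long-lived admin accounts is simply finding them. The sketch below audits a hypothetical inventory export and flags privileged accounts that are older than a cutoff and not provisioned just-in-time. The field names `is_admin`, `jit`, and `created`, and the 90-day threshold, are my assumptions, not Unit 42’s guidance.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical inventory export; field names and values are invented.
ACCOUNTS = [
    {"name": "svc-backup", "is_admin": True, "jit": False, "created": "2019-03-01"},
    {"name": "alice-admin", "is_admin": True, "jit": True, "created": "2025-11-20"},
    {"name": "bob", "is_admin": False, "jit": False, "created": "2018-06-12"},
]


def standing_admins(accounts, now, max_age_days=90):
    """Return names of admin accounts that are not JIT and older than max_age_days."""
    cutoff = now - timedelta(days=max_age_days)
    flagged = []
    for acct in accounts:
        # Date-only ISO strings parse to midnight; treat them as UTC.
        created = datetime.fromisoformat(acct["created"]).replace(tzinfo=timezone.utc)
        if acct["is_admin"] and not acct["jit"] and created < cutoff:
            flagged.append(acct["name"])
    return flagged


if __name__ == "__main__":
    print(standing_admins(ACCOUNTS, now=datetime.now(timezone.utc)))
```

Each flagged account is a candidate either for removal or for conversion to just-in-time elevation with an approval step.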

As with most AD-style attacks, they recommend hardening Entra ID, since compromised Entra ID access can be used to deploy wipers via Intune, as happened at Stryker. I’ve seen IR firms like Palo Alto Networks push the community harder to remove local Administrator accounts altogether.

🔗 Open Source

Yet another agent skills library, this time from the folks at Elastic. They split the skills into groups: cloud, Elasticsearch, Kibana, observability, and security. Their detection rule agent skill, for example, has a rule-tuning workflow that uses internal scripts within the skill to identify and fix noisy rules.
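Elastic’s scripts aren’t reproduced here, but one common heuristic for spotting noisy rules is easy to sketch: flag rules that have enough alert volume and where most alerts were closed as false positives. The alert fields, thresholds, and rule names below are hypothetical.

```python
from collections import Counter

# Hypothetical alert export: which rule fired and how the analyst closed it.
ALERTS = (
    [{"rule": "Suspicious PowerShell Download", "status": "false_positive"} for _ in range(4)]
    + [{"rule": "Base64 Decode via CLI", "status": "true_positive"} for _ in range(3)]
    + [{"rule": "Base64 Decode via CLI", "status": "false_positive"}]
    + [{"rule": "Rare Parent Process", "status": "false_positive"}]
)


def noisy_rules(alerts, min_alerts=3, fp_ratio=0.8):
    """Rules with at least min_alerts alerts, of which >= fp_ratio were false positives."""
    totals = Counter(a["rule"] for a in alerts)
    false_positives = Counter(a["rule"] for a in alerts if a["status"] == "false_positive")
    return sorted(
        rule
        for rule, total in totals.items()
        if total >= min_alerts and false_positives[rule] / total >= fp_ratio
    )


if __name__ == "__main__":
    print(noisy_rules(ALERTS))
```

The `min_alerts` floor keeps a single unlucky false positive (like the lone “Rare Parent Process” alert) from marking a rule as noisy.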

VMkatz is a credential-harvesting tool that extracts Windows credentials from VM snapshots and virtual disks. The idea here is that an attacker lands in an environment where these VMs contain the credentials they need to escalate privileges or move laterally, but the disks are so large that copying them off would take forever, or worse, risk detection.

Running this binary on a target environment helps relieve this burden by performing the extraction directly on the box.

redStack is a full-stack lab environment for folks to learn how to use post-exploitation tools on a victim environment without worrying about infrastructure configuration. It has an impressive architecture, and it’s all hosted on AWS. The README is succinct and contains step-by-step instructions for deploying three post-exploitation tools and using Apache redirectors to route traffic to specific C2 tools.

An OpenClaw plugin that acts as an endpoint security tool or firewall for AI. It has a demo of three security controls: blocking risky actions or skills, minimizing risky filesystem access, and limiting outbound communication. It’s cool to see projects like this spring up because you start to get a sense of where security technology is going, and you can expect products to emerge that solve this for businesses.
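Those three controls map onto a simple allow/deny gate that runs before an agent action executes. This is a hypothetical Python sketch, not the plugin’s code or OpenClaw’s API: the tool names, the `Policy` fields, and the return strings are all made up. Paths are normalized before the allowlist check so `..` segments can’t escape an allowed root, though symlinks are not resolved.

```python
import posixpath
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Optional
from urllib.parse import urlparse


@dataclass(frozen=True)
class Policy:
    blocked_tools: frozenset  # risky actions/skills that are never allowed
    allowed_roots: tuple      # filesystem prefixes the agent may touch
    allowed_hosts: frozenset  # outbound destinations the agent may contact


def check_action(policy: Policy, tool: str, path: Optional[str] = None, url: Optional[str] = None) -> str:
    """Return "allow" or a "deny: <reason>" string for one proposed agent action."""
    if tool in policy.blocked_tools:
        return "deny: blocked tool"
    if path is not None:
        # normpath collapses ".." so "/workspace/../etc" becomes "/etc" before the check.
        normalized = PurePosixPath(posixpath.normpath(path))
        # is_relative_to compares whole path components (Python 3.9+).
        if not any(normalized.is_relative_to(root) for root in policy.allowed_roots):
            return "deny: path outside allowed roots"
    if url is not None and urlparse(url).hostname not in policy.allowed_hosts:
        return "deny: host not allowlisted"
    return "allow"


POLICY = Policy(
    blocked_tools=frozenset({"shell.exec"}),
    allowed_roots=("/workspace",),
    allowed_hosts=frozenset({"api.github.com"}),
)

if __name__ == "__main__":
    print(check_action(POLICY, "fs.read", path="/workspace/../etc/passwd"))
```

Returning a reason string rather than a bare boolean makes it easy to log why an action was blocked, which is the kind of telemetry a detection engineer would want from an AI firewall.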

Hush is a policy spec for writing rules and checks to implement inside AI security controls. This spec reminds me a lot of OPA, but instead of returning pass/fail, you translate YAML rules into enforcement controls.
